The United States defense and financial sectors underwent a seismic shift this week as the federal government fundamentally reorganized its relationship with leading artificial intelligence providers while Wall Street’s elite further integrated digital assets into the national banking infrastructure. In a series of rapid-fire developments, the Department of Defense (DoD) effectively blacklisted Anthropic, a prominent AI safety-focused firm, labeling it a "supply-chain risk to national security," while simultaneously awarding a landmark contract to its primary competitor, OpenAI. In parallel with these policy maneuvers, Morgan Stanley filed for a federal bank charter to provide comprehensive cryptocurrency services, signaling a definitive end to the era of institutional hesitation regarding digital assets.

The Anthropic Designation and the "Supply Chain Risk" Label

The controversy began Friday afternoon when Secretary of Defense Pete Hegseth issued a formal declaration identifying Anthropic as a "Supply-Chain Risk to National Security." This designation has rarely been applied to domestic technology companies; it is typically reserved for foreign entities or firms under the influence of adversarial nations, such as Huawei or ZTE. The ruling carries immediate and severe implications for the federal procurement landscape, requiring all defense contractors to certify that they are not using Anthropic’s models or integrated systems within their workflows.

Following the Pentagon’s announcement, President Donald Trump issued an executive directive to every federal agency, mandating an immediate cessation of Anthropic technology use. While the order is effective immediately, a six-month transition period has been established for agencies with deep-seated dependencies on Anthropic’s systems to migrate their data and operations to approved alternatives.

The rationale behind the "supply chain risk" label remains partially shrouded in classified assessments, but public statements from administration officials suggest a misalignment between Anthropic’s corporate governance and the military’s strategic objectives. Anthropic, founded by former OpenAI executives with a focus on "Constitutional AI" and safety, has historically maintained a cautious approach toward military applications.

Anthropic CEO Responds to "Unprecedented" Decision

Dario Amodei, CEO of Anthropic, broke his silence during an interview with CBS News, describing the government’s move as "unprecedented" and fundamentally at odds with the company’s history of cooperation. According to Amodei, Anthropic has been a consistent partner to the Department of Defense, agreeing to nearly every AI use case proposed by defense officials. However, the company drew a firm line at two specific areas: the development of fully autonomous weapons platforms and the implementation of mass domestic surveillance systems.

Amodei framed the company’s refusal as a defense of American values rather than an act of obstruction. He emphasized that the right of military officers to maintain human oversight over lethal decisions and the right of citizens to be free from government over-monitoring are foundational to the American democratic experiment. "These are things that are fundamental to Americans," Amodei stated. "The right not to be spied on by the government, the right for our military officers to make decisions about war themselves and not turn it over completely to a machine."

The fallout from this decision has sparked intense debate within the technology sector. Critics of the administration’s move argue that penalizing a domestic company for adhering to ethical safety guidelines could stifle innovation and force a "race to the bottom" in AI safety standards. Conversely, proponents of the move suggest that in an era of global AI competition, the military requires partners that are fully committed to the integration of AI across all combat and intelligence domains without reservation.

OpenAI Secures Classified Military Integration

In timing that many industry analysts described as "calculated," OpenAI announced a major contract with the Department of Defense just hours after the Anthropic restrictions were made public. OpenAI CEO Sam Altman confirmed via social media that the company had reached an agreement to deploy its generative AI models inside the Pentagon’s highly restricted "classified networks."

This deal marks a significant pivot for OpenAI, which in January 2024 famously removed language from its usage policies that had prohibited the use of its technology for "military and warfare" purposes. Altman’s statement emphasized a collaborative approach to safety, noting that the Department of Defense demonstrated a "deep respect for safety" and a willingness to operate within the specific limits defined by OpenAI’s internal governance.

The deployment of OpenAI models on classified networks suggests that the Pentagon intends to use these tools for high-level strategic analysis, intelligence synthesis, and perhaps operational planning. By hosting these models on secure, air-gapped, or classified intranets, the DoD aims to leverage the power of large language models (LLMs) while mitigating the risks of data leakage or external manipulation.

Timeline of the "Turbulent Friday" AI Realignment

The speed of the transition from Anthropic to OpenAI suggests a coordinated shift in federal AI policy. The following chronology outlines the key events of February 28:

- 09:00 AM EST: Reports circulate regarding a pending "national security" review of domestic AI firms with restrictive military use policies.

- 01:30 PM EST: Secretary of Defense Pete Hegseth officially labels Anthropic a "Supply-Chain Risk to National Security," effectively barring it from the defense ecosystem.

- 02:15 PM EST: President Donald Trump issues an executive order extending the ban on Anthropic technology to all federal agencies, citing the need for "total alignment" in national security infrastructure.

- 04:45 PM EST: OpenAI CEO Sam Altman announces the signing of a contract to provide AI models for the Pentagon’s classified networks.

- 06:00 PM EST: Anthropic CEO Dario Amodei appears on national media to defend the company’s ethical stance and decry the "unprecedented" nature of the government’s designation.

Morgan Stanley’s Strategic Entry into Crypto Banking

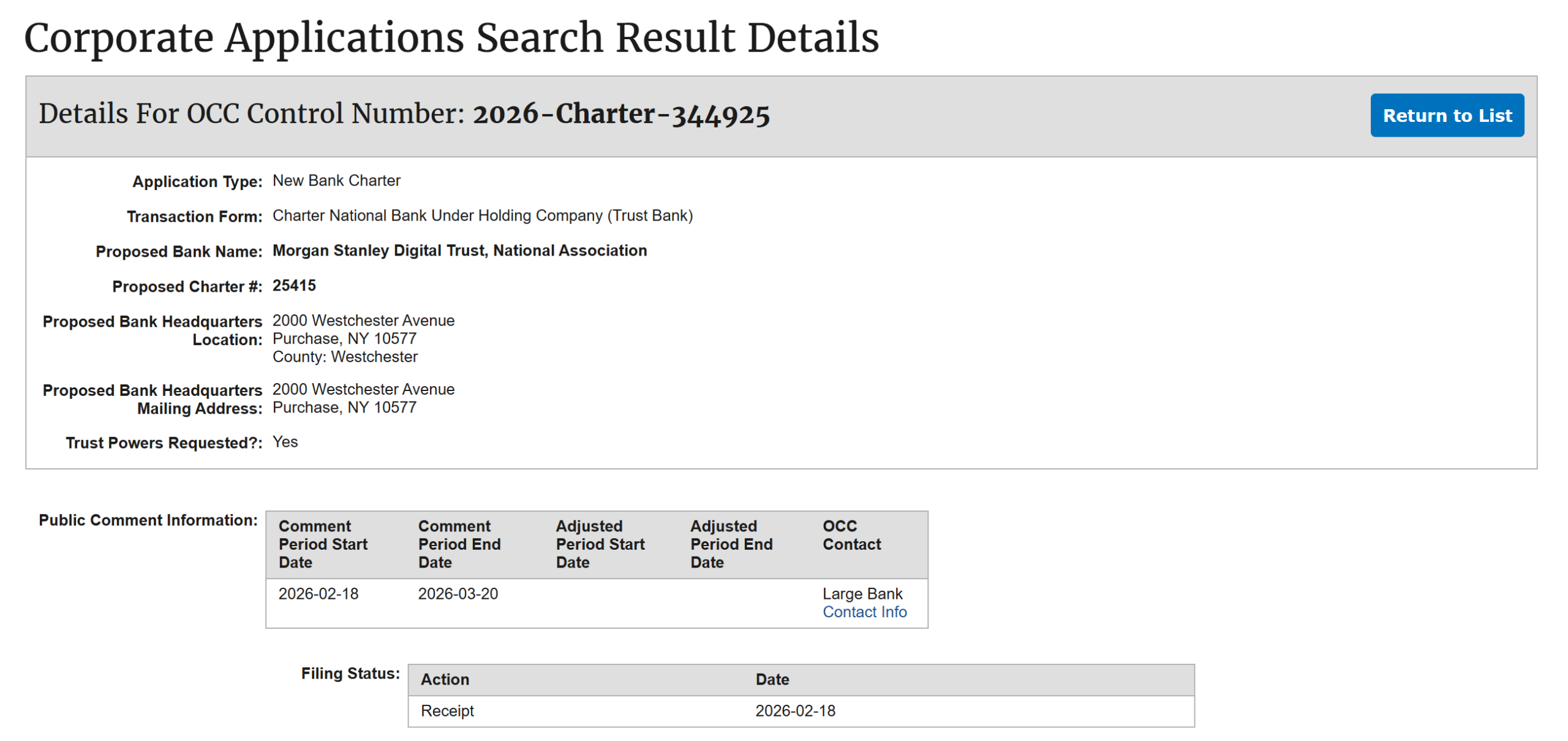

While the AI sector grappled with geopolitical realignments, the financial world witnessed a milestone in the institutionalization of digital assets. Morgan Stanley, a titan of Wall Street with over $1.5 trillion in assets under management, has officially applied for a de novo national trust bank charter with the Office of the Comptroller of the Currency (OCC).

The application, filed under the name "Morgan Stanley Digital Trust, National Association," was received by the OCC on February 18, though full details of the business plan emerged only this Friday. The move represents a significant escalation of Morgan Stanley’s involvement in the crypto space, moving beyond merely offering Bitcoin ETFs to its wealth management clients.

A national trust bank charter would allow Morgan Stanley to act as a qualified custodian for digital assets, a role currently dominated by specialized firms like Coinbase Custody or Anchorage Digital. According to filings, the new subsidiary intends to provide a full suite of services, including:

- Direct Custody: Holding private keys for Bitcoin, Ethereum, and other digital assets for institutional and high-net-worth clients.

- Execution Services: Facilitating the purchase, sale, and swap of digital assets directly through the bank’s infrastructure.

- Staking Services: Allowing clients to earn rewards on their proof-of-stake assets (such as Ethereum) while maintaining them within the bank’s regulated environment.

- Transfer Agency: Managing the movement of digital assets between institutional entities.

The Institutional Shift and Regulatory Context

Morgan Stanley’s move follows a broader trend of major financial institutions seeking more robust regulatory frameworks for their digital asset operations. By pursuing a national charter through the OCC, Morgan Stanley bypasses the fragmented state-by-state licensing process (such as New York’s BitLicense) in favor of a uniform federal standard.

This development is particularly significant given the history of the OCC’s stance on crypto. Under previous administrations, the OCC fluctuated between encouraging banks to provide crypto services and issuing "interpretive letters" that raised the barrier to entry. Morgan Stanley’s application suggests a high level of confidence in the current regulatory environment’s permanence and a belief that institutional demand for crypto has reached a critical mass that requires internal, rather than outsourced, solutions.

Industry analysts suggest that Morgan Stanley’s entry could trigger a "domino effect" among other Tier-1 banks. While Goldman Sachs and JPMorgan Chase have explored various blockchain initiatives, Morgan Stanley’s move to create a dedicated national trust bank for digital assets sets a new benchmark for how legacy banks interact with the crypto economy.

Broader Impact and Implications for the Future

The convergence of these events highlights a broader theme: the tightening integration of emerging technologies into the core structures of national power, whether through the military-industrial complex or the federal banking system.

For the AI industry, the "Anthropic Precedent" serves as a warning that ethical "red lines" regarding military use may carry heavy commercial and regulatory costs in the current political climate. The government’s preference for OpenAI suggests that winning major federal contracts now requires a level of transparency and cooperation with defense agencies that exceeds previous industry norms. This may lead to a bifurcation of the AI market, with some firms focusing on "civilian-only" models while others become deeply integrated "defense-tech" giants.

In the financial sector, Morgan Stanley’s charter application marks the end of the "experimental" phase of institutional crypto. By seeking to trade, stake, and custody assets under a national bank charter, the firm is treating Bitcoin and other digital assets with the same regulatory seriousness as equities or bonds. This move is expected to provide the necessary "on-ramps" for pension funds and insurance companies that have previously been sidelined by the lack of a major Wall Street custodian.

As these two stories unfold, they underscore a transformative period in American policy where the boundaries between private innovation, national security, and global finance are being redrawn. The decisions made this week by the Pentagon and Morgan Stanley will likely resonate for years, defining the parameters of AI ethics and the legitimacy of the digital economy.