The landscape of international finance is undergoing a dual transformation as the rapid adoption of digital currencies converges with the swift advancement of artificial intelligence. While blockchain technology promises transparency and security, a new "shadowy threat" has emerged in the form of high-fidelity deepfakes: AI-generated audio and video impersonations that are now infiltrating high-stakes global cryptocurrency negotiations. Diplomats, central bankers, and financial regulators, who have increasingly relied on virtual platforms for international coordination, now find themselves at the center of a sophisticated technological arms race. As the lines between authentic representation and synthetic deception blur, the integrity of the global financial order faces an unprecedented challenge that necessitates a total overhaul of diplomatic security protocols.

The Convergence of Generative AI and Global Crypto Governance

The rise of synthetic envoys marks a significant escalation in the history of cyber-espionage. In the current geopolitical climate, cryptocurrency is no longer a fringe asset class; it is a pillar of modern economic strategy, involving discussions on central bank digital currencies (CBDCs), cross-border payment rails, and the regulation of stablecoins. These negotiations often occur behind closed doors or via encrypted video conferencing, providing the perfect environment for malicious actors to deploy generative AI.

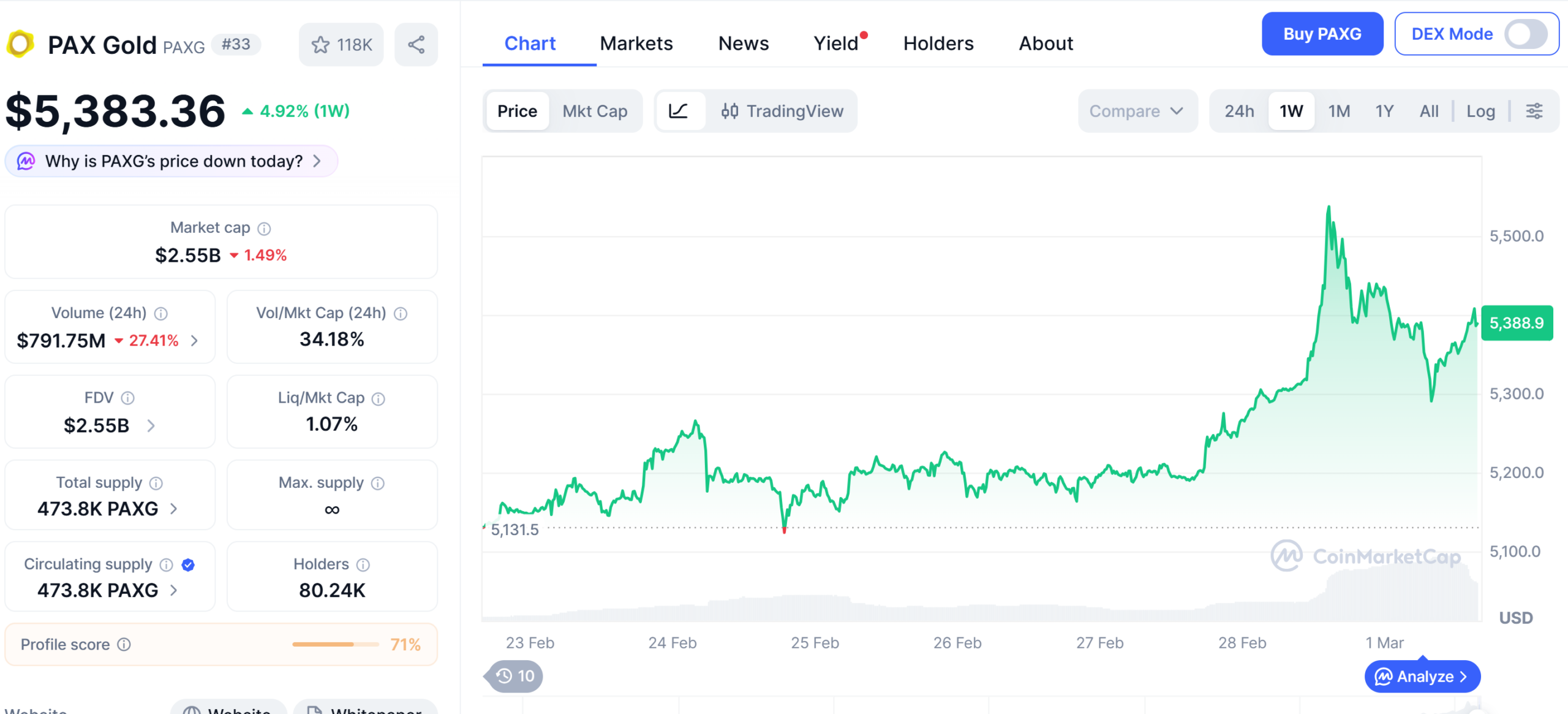

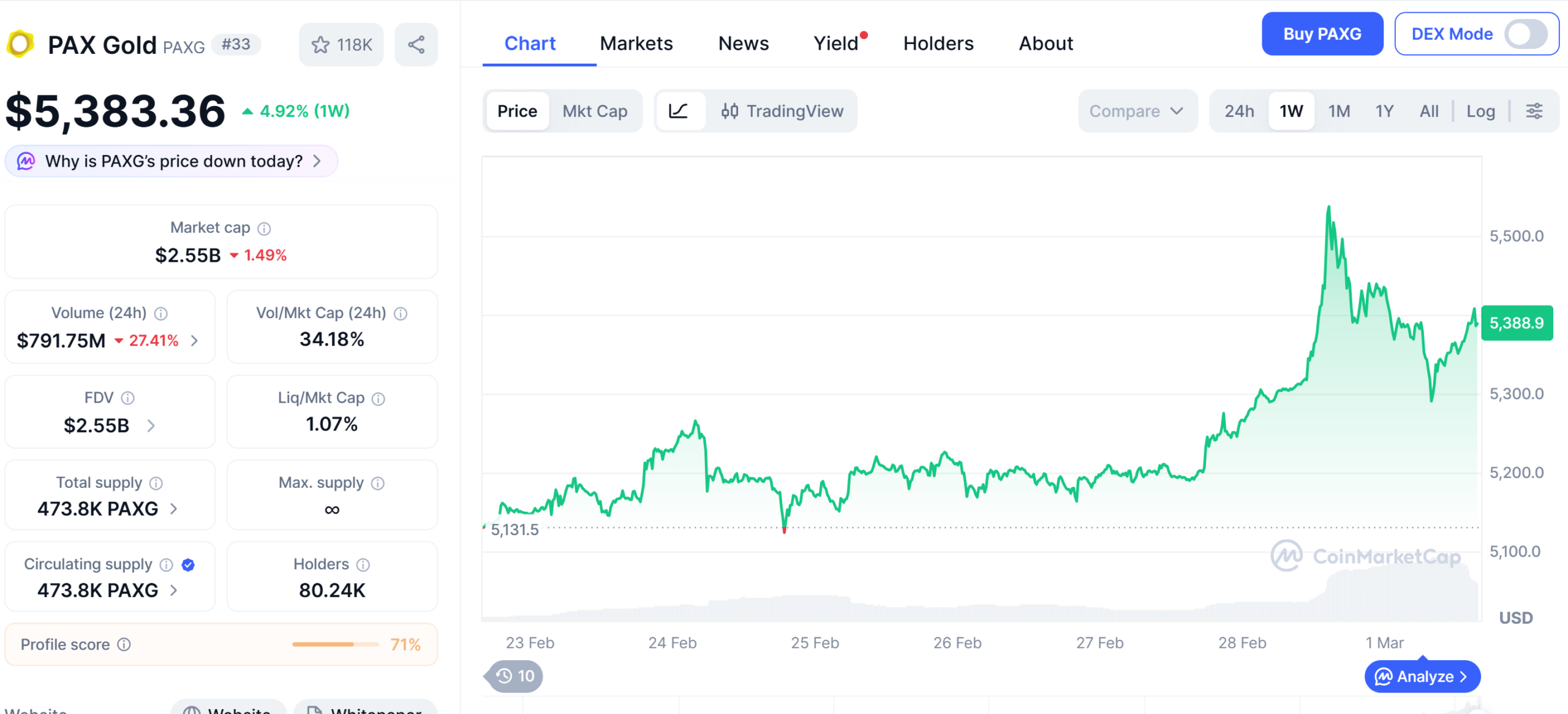

Generative Adversarial Networks (GANs), one of the core technologies behind deepfakes, have reached a level of sophistication where they can mimic not only the likeness of a person but also their unique vocal cadences, micro-expressions, and linguistic idiosyncrasies. In a virtual summit setting, a deepfake of a high-ranking official could theoretically propose a "poison pill" amendment to a regulatory framework or endorse a specific blockchain protocol that contains backdoors for illicit state actors. This is no longer the realm of speculative fiction; it is a clear and present danger to the $2 trillion cryptocurrency market and to the stability of the traditional financial systems that are increasingly intertwined with it.

A Chronology of Synthetic Deception in Finance

To understand the current threat, one must look at the rapid evolution of AI-driven impersonation over the last decade. The timeline reveals a transition from rudimentary face-swapping to industrial-grade financial sabotage.

- 2017-2019: The Foundation of Deepfakes. The term "deepfake" entered the public lexicon, primarily through low-quality face-swaps in entertainment. However, cybersecurity experts began warning that the technology could eventually be used for "CEO fraud" or Business Email Compromise (BEC).

- 2020: The First Major Financial Strike. In a landmark case, a bank manager in Hong Kong authorized $35 million in transfers after receiving a call from what he believed was a company director. The attackers used "deep voice" technology to clone the director’s speech, in one of the first publicly reported high-value successes of audio deepfakes in finance.

- 2021-2022: Expansion into Virtual Meetings. As the COVID-19 pandemic moved diplomacy to Zoom and Teams, hackers began testing live video manipulation. Early attempts were often caught due to "glitching" or unnatural lighting, but they signaled a shift in strategy toward real-time deception.

- 2023: The Generative AI Boom. The release of high-level large language models and video generation tools democratized deepfake creation. Tools that once required massive computing power became accessible to any state-sponsored or independent hacking group.

- 2024: The Targeting of Crypto Diplomacy. Reports began to emerge of "synthetic envoys" appearing in preliminary working groups for international crypto standards, specifically targeting forums where decentralized finance (DeFi) and anti-money laundering (AML) policies are debated.

Technical Mechanics and Data-Driven Threats

The efficacy of deepfakes in the crypto sector is backed by sobering data regarding human perception and the limitations of current detection software. According to research from University College London, humans correctly identify deepfake speech only about 73% of the time, a figure that is likely to fall further during stressful or fast-paced negotiations.

The creation process involves two competing neural networks: the "Generator," which creates the fake image or audio, and the "Discriminator," which tries to identify the fake. As they iterate, the Generator becomes so proficient that the Discriminator can no longer distinguish between the real and the synthetic. For a diplomat or a finance minister, this means that their entire public history—years of televised interviews, speeches at the World Economic Forum, and social media videos—serves as a massive dataset for training a perfect digital doppelganger.
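The contest between the two networks is commonly written as a minimax objective. The symbols below are introduced here for illustration and do not appear in the text above: $G$ is the Generator, $D$ is the Discriminator, $x$ is a real sample drawn from the data distribution $p_{\text{data}}$, and $z$ is random noise drawn from a prior $p_z$.

$$
\min_G \max_D \; V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}}\big[\log D(x)\big] + \mathbb{E}_{z \sim p_z}\big[\log\big(1 - D(G(z))\big)\big]
$$

The Discriminator tries to push $D(x)$ toward 1 for real samples and $D(G(z))$ toward 0 for fakes, while the Generator tries to do the opposite. At the theoretical equilibrium the generated distribution matches the real one and $D$ outputs $1/2$ everywhere, which is the formal version of the Discriminator no longer being able to tell the two apart.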

Furthermore, the "cost of attack" has plummeted. While a high-quality deepfake used to require weeks of processing, modern software can now render realistic avatars in near real-time with less than 60 seconds of reference audio. This speed allows attackers to pivot mid-conversation, responding to questions with synthetic voices that carry the authority of a world-renowned economist or a regulatory head.

Case Studies: The European Union and APEC Incidents

Recent real-world incidents have sent shockwaves through the diplomatic community. One of the most notable cases involved the European Union’s deliberations on the digital euro. During a sensitive virtual session involving economic advisors, an attendee appeared who perfectly resembled a senior advisor to a major European central bank. The impostor used the platform to advocate for reduced oversight on decentralized exchange platforms, arguing that "regulatory friction" would stifle innovation. The deception was uncovered only when a colleague noticed that the impostor used a specific phrase, "unbounded liquidity," that was inconsistent with the real advisor’s well-known conservative fiscal stance.

In another instance involving the Asia-Pacific Economic Cooperation (APEC), a deepfake of a prominent regional economist was used during a discussion on sustainable crypto mining. The digital avatar pushed for a resolution that would have granted significant tax breaks to mining operations in a specific jurisdiction known for high carbon emissions. The participants became suspicious when the background lighting of the "economist’s" video feed did not match the time of day in his supposed location. These incidents illustrate that while the AI is becoming nearly perfect, "analog" discrepancies are often the only remaining line of defense.

Official Responses and Regulatory Alarm

The international community is beginning to react to this "identity crisis" in digital diplomacy. The Financial Action Task Force (FATF), the global money laundering and terrorist financing watchdog, has recently updated its guidance to warn member nations about the use of AI in bypassing "Know Your Customer" (KYC) and "Customer Due Diligence" (CDD) protocols.

In a recent statement, a spokesperson for the European Central Bank (ECB) noted, "The integrity of our communication is as vital as the security of our currency. If we cannot verify who is on the other side of the screen, the entire framework of international cooperation is at risk." Similarly, Interpol has issued a "Purple Notice" regarding the use of deepfakes in social engineering, emphasizing that the financial sector, and specifically the crypto-asset industry, is a primary target due to the irreversible nature of blockchain transactions.

National security agencies are also weighing in. The U.S. Cybersecurity and Infrastructure Security Agency (CISA) has categorized deepfakes as a "significant threat to the digital ecosystem," calling for the development of "content provenance" standards that would track the origin of every digital file and stream.
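One building block that content provenance schemes could rely on is a hash chain over the segments of a stream, so that any later alteration of a segment or its order becomes detectable. The following is a minimal sketch under that assumption, using only the Python standard library. It does not reproduce any format specified by CISA or by an existing standard, and the function names, record fields, and the source label are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(record: dict) -> str:
    """Hash a manifest record deterministically (sorted keys, UTF-8)."""
    encoded = json.dumps(record, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def build_manifest(segments: list[bytes], source: str) -> list[dict]:
    """Create one record per segment, each linked to the previous record."""
    manifest = []
    previous = GENESIS
    for index, segment in enumerate(segments):
        record = {
            "index": index,
            "source": source,  # hypothetical label for the originating feed
            "segment_sha256": hashlib.sha256(segment).hexdigest(),
            "previous_record": previous,
        }
        manifest.append(record)
        previous = record_hash(record)
    return manifest


def verify_manifest(segments: list[bytes], manifest: list[dict]) -> bool:
    """Check that the segments match the manifest and the chain is unbroken."""
    if len(segments) != len(manifest):
        return False
    previous = GENESIS
    for index, (segment, record) in enumerate(zip(segments, manifest)):
        if record["index"] != index:
            return False
        if record["previous_record"] != previous:
            return False
        if record["segment_sha256"] != hashlib.sha256(segment).hexdigest():
            return False
        previous = record_hash(record)
    return True


if __name__ == "__main__":
    feed = [b"segment-0", b"segment-1", b"segment-2"]
    manifest = build_manifest(feed, "hypothetical-ministry-feed")
    print(verify_manifest(feed, manifest))
```

A chain like this only shows that the content matches a manifest the verifier already trusts. An attacker who can replace both the segments and the manifest could rebuild a consistent chain, so the manifest (or at least its final record hash) would still need to be signed or published through a trusted channel.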

Strategic Implications: The Vulnerability of Virtual Diplomacy

The implications of this technology extend far beyond simple fraud; they touch upon the core of geopolitical trust. Cryptocurrency negotiations are often a proxy for broader economic competition between nations. If a nation can use AI to disrupt a rival’s crypto-regulatory framework, it can gain a significant competitive advantage in the future of digital trade.

The "trust gap" created by deepfakes may force a regression in diplomatic methods. We may see a return to "physical-only" high-level summits for sensitive financial discussions, effectively slowing down the pace of global innovation. For the crypto industry, which prides itself on being "digital-first," this represents a significant cultural and operational setback.

Moreover, the psychological impact of the "liar’s dividend" cannot be ignored. This is a phenomenon in which real people can dismiss their own genuine mistakes or controversial statements as "deepfakes," leading to a breakdown in accountability. In the context of a central bank governor making a market-moving statement, the ability to later claim the video was an AI forgery could create unprecedented market volatility and legal chaos.

Safeguarding the Future: Technical and Policy Solutions

To combat the deepfake menace, a multi-layered approach is required, combining cutting-edge technology with traditional diplomatic rigor.

- Blockchain-Based Authentication: Ironically, the technology being negotiated may provide the solution. By using decentralized identity (DID) solutions, negotiators can sign their video streams with private cryptographic keys. Viewers can then check each signature against the negotiator's public key, which is anchored on an immutable ledger, to verify the source of the stream.

- Liveness Detection and Biometrics: Virtual meeting platforms must integrate real-time liveness detection that looks for "biological markers" that AI cannot yet perfectly replicate, such as the subtle blood-flow patterns in the face measured by photoplethysmography (PPG) or the microscopic tremors of the human voice.

- Encrypted and Air-Gapped Channels: For the most sensitive crypto negotiations, the use of standard commercial video software may need to be abandoned in favor of proprietary, air-gapped communication networks with hardware-based encryption.

- Diplomatic Training: Modern diplomats must be trained as "digital detectives." Recognizing the "uncanny valley" of AI—such as unnatural blinking patterns, audio-visual desynchronization, or strange reflections in the eyes—is now a core competency for any official involved in international finance.
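The signing step in the first item above can be illustrated with a small, self-contained sketch. It assumes a Lamport one-time signature built from SHA-256, chosen only because it needs nothing beyond the Python standard library. A real deployment would more likely use an established scheme such as Ed25519 from a vetted library, plus a DID lookup to fetch the public key, neither of which is shown here. All function names are hypothetical.

```python
import hashlib
import secrets

BITS = 256  # one key pair entry per bit of the SHA-256 message digest


def _sha256(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def _message_bits(message: bytes) -> list[int]:
    """Return the 256 bits of SHA-256(message), most significant bit first."""
    digest = _sha256(message)
    return [(digest[i // 8] >> (7 - i % 8)) & 1 for i in range(BITS)]


def generate_keypair():
    """Private key: 256 pairs of random secrets. Public key: their hashes."""
    private = [
        (secrets.token_bytes(32), secrets.token_bytes(32)) for _ in range(BITS)
    ]
    public = [(_sha256(zero), _sha256(one)) for zero, one in private]
    return private, public


def sign(private, message: bytes) -> list[bytes]:
    """Reveal one secret per digest bit. A key pair must sign only ONE message."""
    return [private[i][bit] for i, bit in enumerate(_message_bits(message))]


def verify(public, message: bytes, signature: list[bytes]) -> bool:
    """Check that each revealed secret hashes to the matching public value."""
    if len(signature) != BITS:
        return False
    bits = _message_bits(message)
    return all(
        _sha256(revealed) == public[i][bit]
        for i, (revealed, bit) in enumerate(zip(signature, bits))
    )


if __name__ == "__main__":
    private_key, public_key = generate_keypair()
    segment = b"hypothetical video segment bytes"
    signature = sign(private_key, segment)
    print(verify(public_key, segment, signature))
    print(verify(public_key, b"altered segment", signature))
```

Because a Lamport key pair is safe for only a single message, a live stream would need a fresh key per segment or a different scheme altogether. The sketch is meant to show the sign-then-verify flow, not to recommend this particular construction.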

Conclusion: Preserving Trust in an Augmented World

As we move deeper into the decade, the intersection of AI and cryptocurrency will continue to produce both immense value and existential risks. The emergence of deepfakes in crypto negotiations is a reminder that in a virtual world, "seeing is no longer believing." The international community must act with urgency to establish new norms of digital trust.

Preserving the authenticity of global financial discourse is not merely a technical challenge; it is a prerequisite for the continued evolution of the global economy. By embracing blockchain-verified identities and robust AI-resistant protocols, the world can ensure that the future of digital currency is built on a foundation of genuine human cooperation, rather than synthetic deception. The turbulent waters of digital diplomacy require a new kind of vigilance—one where technology is used not just to innovate, but to protect the very essence of truth in the digital age.