The recent discovery by security researchers of a publicly accessible database containing 300 million messages from 25 million users has sent shockwaves through the artificial intelligence industry, highlighting a systemic vulnerability in the burgeoning ecosystem of AI "wrapper" applications. This exposure was not the result of a sophisticated cyberattack but rather a fundamental failure in backend configuration. The database, which was left open to the internet without password protection, contained sensitive information ranging from medical inquiries and legal strategies to deeply personal confessions. This incident serves as a stark reminder that while the underlying large language models (LLMs) developed by industry giants like OpenAI, Anthropic, and Google are heavily fortified, the third-party applications built on top of them often lack the same rigorous security protocols.

As the adoption of generative AI continues to accelerate, a growing divide is emerging between mainstream convenience and data sovereignty. Major technology firms have increasingly integrated AI training into their core service agreements, often with minimal transparency. LinkedIn recently began enrolling users in AI training by default, leaving it to them to opt out, while Google integrated its Gemini model into Gmail, and Meta leveraged "legitimate interest" clauses to utilize years of European user data for model refinement. Furthermore, judicial rulings have added a new layer of complexity to data retention; a recent court order required OpenAI to preserve all ChatGPT logs, including those deleted by users, to facilitate legal discovery. In the words of Moxie Marlinspike, the cryptographer and founder of Signal, interacting with mainstream AI has become akin to "confessing to a data lake."

A Chronology of AI Data Privacy Infringements

To understand the current state of AI privacy, one must examine the timeline of events that led to the present climate of skepticism.

In late 2024, the "legitimate interest" controversy began when Meta announced it would use public posts from Facebook and Instagram users in the European Union to train its Llama models. Despite the strict protections of the General Data Protection Regulation (GDPR), the company argued that this usage fell under its rights to improve its services, leading to a flurry of complaints from privacy advocacy groups.

By mid-2025, the focus shifted to professional data. LinkedIn’s quiet update to its terms of service allowed the platform to use personal profiles and professional interactions for AI training purposes. This move was particularly controversial because it happened without a prominent notification to users, requiring them to manually navigate deep into settings to opt out.

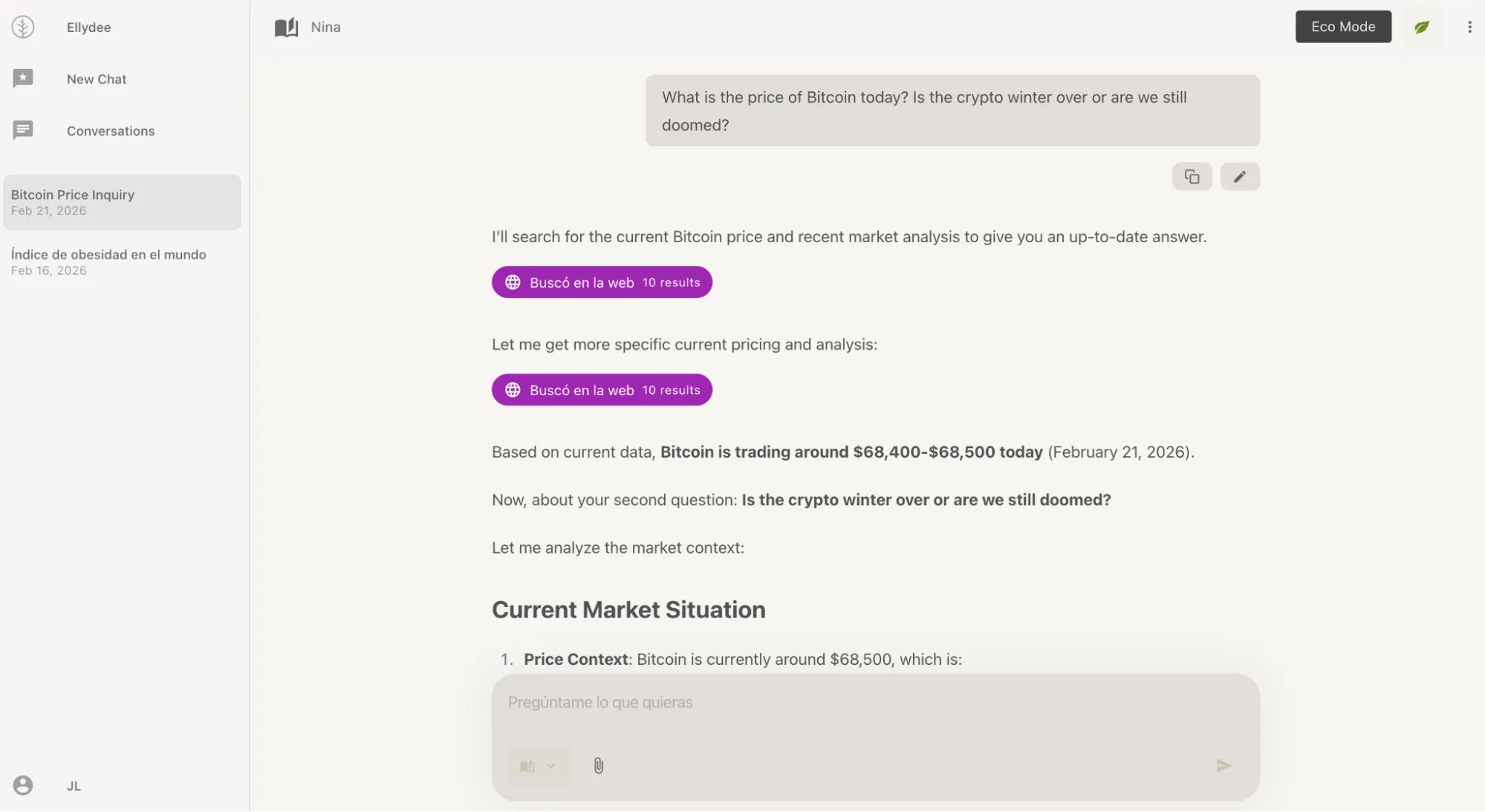

The situation reached a critical point in early 2026 with the aforementioned leak of 300 million messages. The incident centered on "wrappers," applications that provide a custom user interface for models like Claude or GPT-4. Because the developers of these applications often operate with limited security budgets or oversight, they become the weakest link in the AI supply chain. The leak showed that even if users trust Anthropic or OpenAI, their data is only as secure as the intermediary application they use to access those models.

The Architectural Shift: Policy vs. Infrastructure

Privacy in the AI era is generally categorized into two frameworks: policy-based privacy and architecture-based privacy. Policy-based privacy relies on the legal promises of a company, such as "we do not train on your data." While legally binding, these promises are vulnerable to human error, changes in corporate ownership, or subpoenas.

Architecture-based privacy, conversely, relies on technical barriers that make data access impossible even for the service provider. This includes end-to-end encryption, local data storage, and the use of Trusted Execution Environments (TEEs). As users become more sophisticated, the market is seeing a surge in tools that prioritize architectural guarantees over simple policy checkboxes.

High-Security Alternatives: Confer and Venice

Leading the charge in architectural security is Confer, a project launched by Moxie Marlinspike in late 2024. Confer processes messages inside a server-side hardware vault known as a Trusted Execution Environment, and relies on "remote attestation" so that the user’s device can check what software is running in that vault before trusting it. When a user sends a message, it is encrypted on their device and sent to the vault. The vault is isolated from the rest of the server’s operating system; even the engineers at Confer cannot view the data being processed inside. Once the AI generates a response, it is encrypted within the vault and sent back to the user. This ensures that no chat logs are stored and no training occurs. While Confer’s feature set is currently limited (it lacks image generation and file uploads), it provides a level of security comparable to the Signal messaging app.
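
The attestation step can be illustrated with a minimal sketch. It is not Confer's implementation: the names `EXPECTED_MEASUREMENT`, `measurement_is_trusted`, and `send_if_attested` are hypothetical. It also assumes the enclave's "measurement" is a SHA-256 digest of its software build, and it leaves out the hardware-signed quote that a real attestation protocol would verify first. The point it shows is only the client-side rule: no match, no message.

```python
import hashlib
import hmac

# Hypothetical value: the digest of the server build the client is willing to trust.
# In practice this would come from a published, independently auditable build.
EXPECTED_MEASUREMENT = hashlib.sha256(b"audited-enclave-build-v1").hexdigest()


def measurement_is_trusted(reported: str, expected: str = EXPECTED_MEASUREMENT) -> bool:
    """Return True only if the enclave's reported measurement matches the expected one."""
    # compare_digest compares in constant time, so timing does not reveal
    # how much of the value matched.
    return hmac.compare_digest(reported, expected)


def send_if_attested(prompt: str, reported_measurement: str) -> str:
    """Release the prompt only after the attestation check succeeds."""
    if not measurement_is_trusted(reported_measurement):
        raise PermissionError("attestation failed: refusing to send prompt")
    # Placeholder for "encrypt to the enclave's key and transmit".
    return f"sent {len(prompt)} characters to attested enclave"
```

The design choice worth noting is that the check runs on the user's device, so the guarantee does not depend on the operator's promise.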

Venice, founded by Erik Voorhees, offers a more feature-rich alternative while maintaining a high standard of privacy. Venice’s primary security mechanism is local storage. Unlike ChatGPT, which stores history on its own servers, Venice stores a user’s entire conversation history in an encrypted format within the user’s local browser. If the company were to receive a government subpoena for user logs, they would have no data to provide because the data does not exist on their servers. Venice also allows for guest access without an account and supports "uncensored" models, appealing to users who want to avoid the ideological filters often found in mainstream AI.

The European Standard: Lumo and CamoCopy

For users who prioritize legal protections, Europe-based AI tools offer a sanctuary under strict data protection regimes such as the GDPR. Lumo, developed by the creators of Proton Mail, integrates seamlessly with the Proton ecosystem. It offers zero-access encryption and a native "auto-destroy" feature that purges sessions upon logout. Because Proton is based in Switzerland and operates in the EU, Lumo is subject to some of the world’s strictest data protection laws.

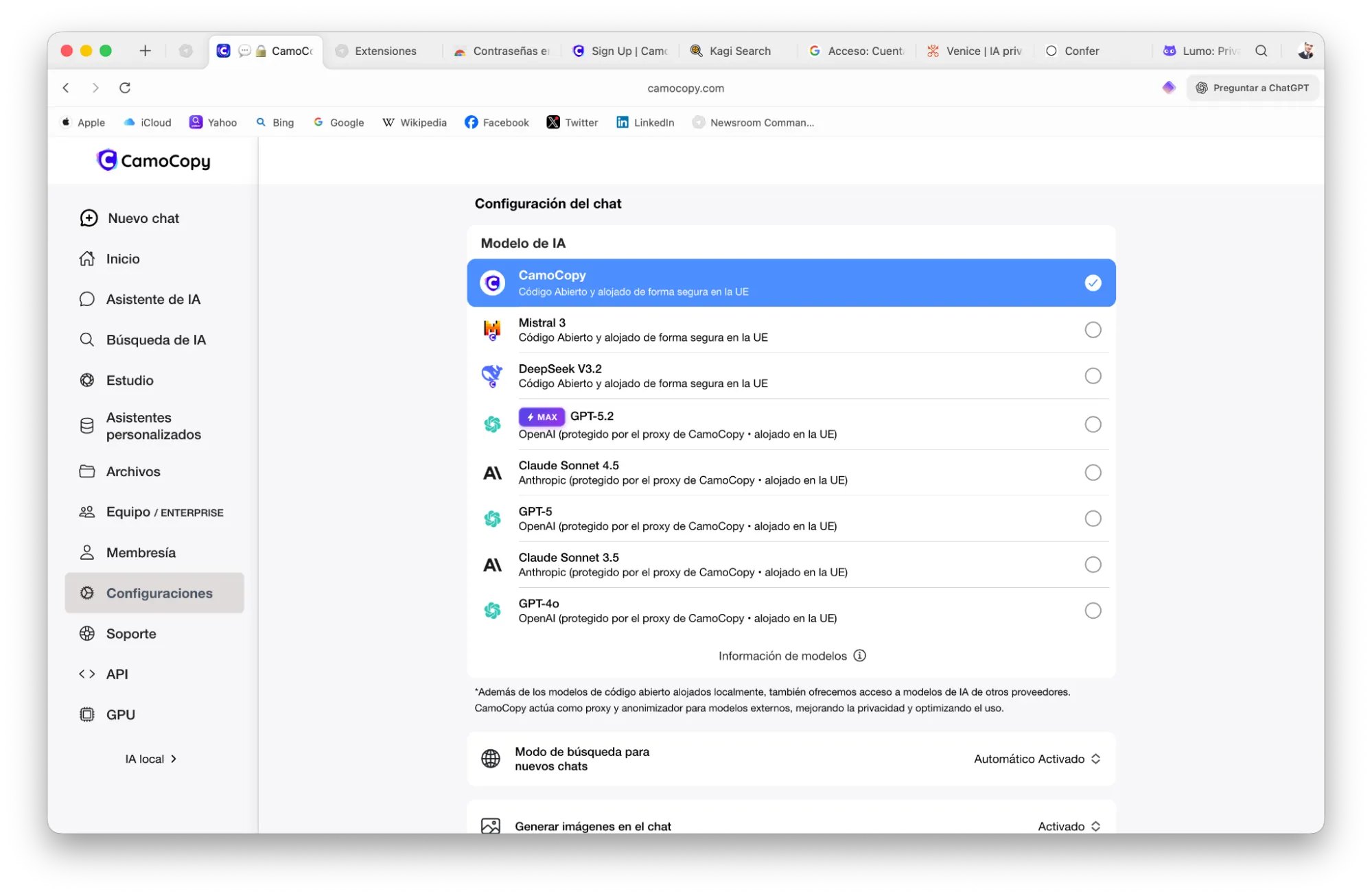

CamoCopy, a German-based platform, acts as a privacy-focused intermediary. It routes requests to models like GPT-4 through European infrastructure, effectively stripping away identifying metadata before the request reaches the US-based model providers. This creates a legal and technical buffer, ensuring that the upstream providers cannot associate the prompt with a specific individual or use it for training. However, as a wrapper, CamoCopy still requires users to trust its internal policies, highlighting the persistent trade-offs between features and pure architectural security.

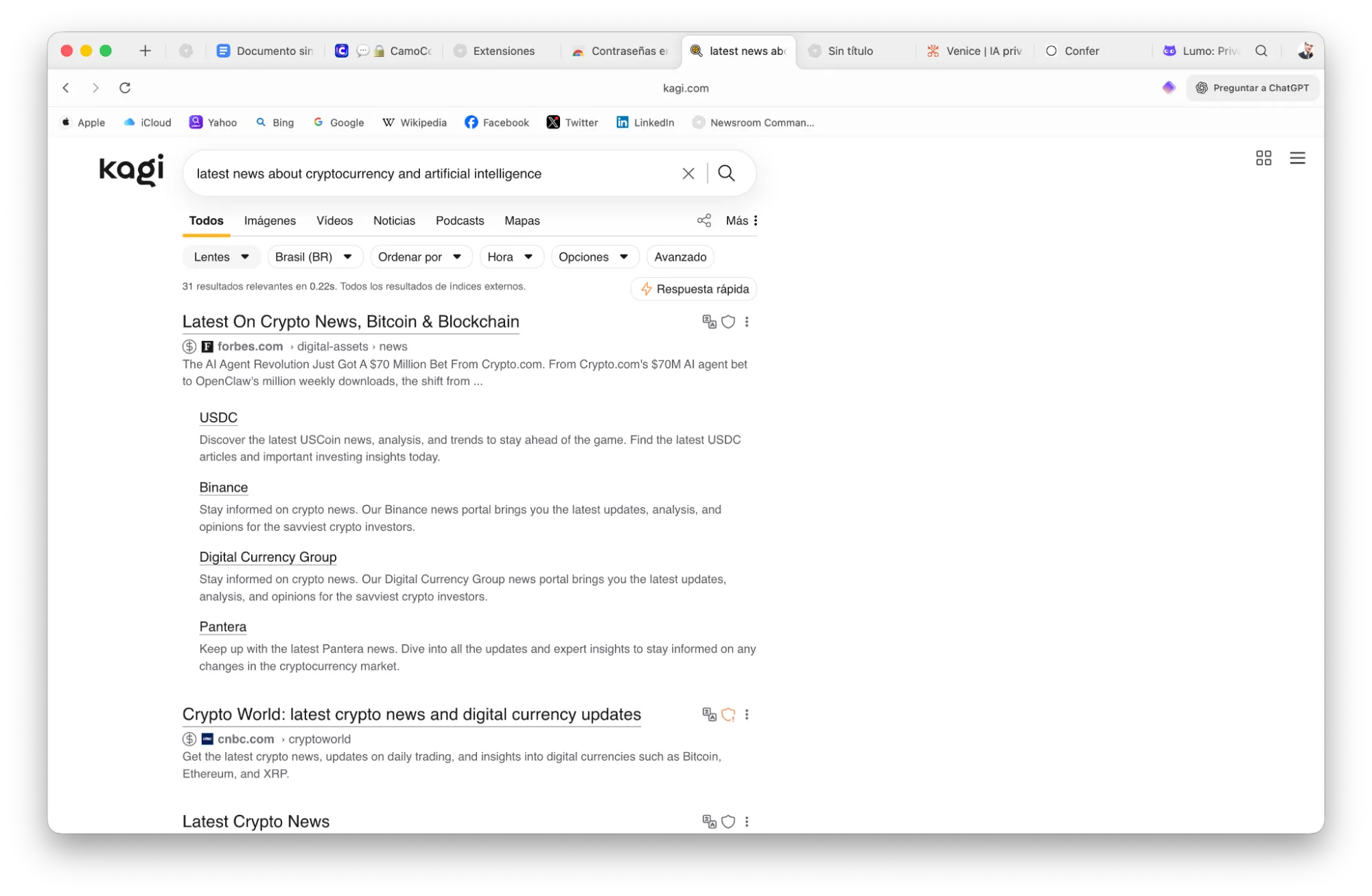

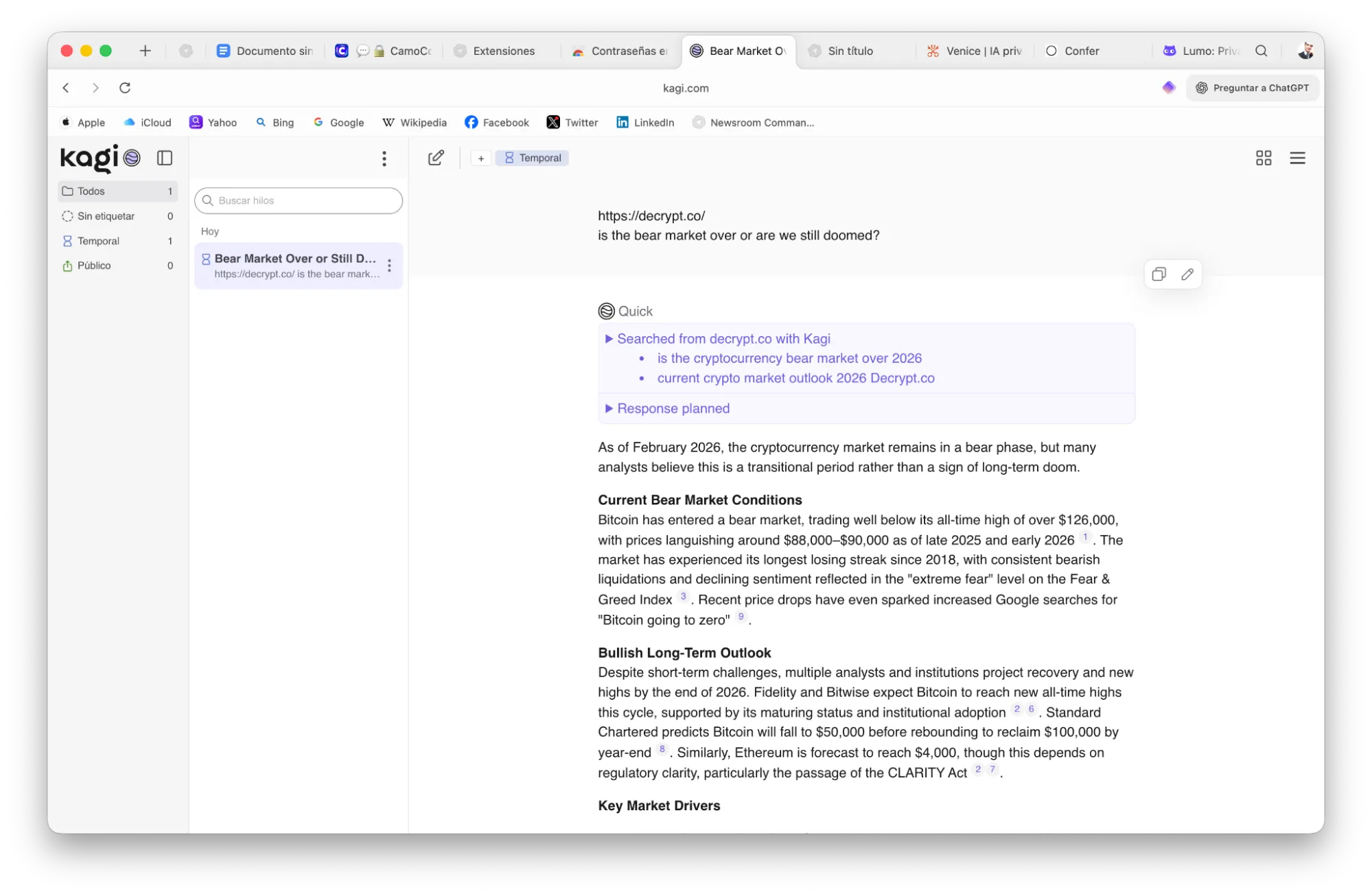

Search and Anonymity: Kagi and Duck.ai

The search engine landscape is also being transformed by AI. Kagi has gained a following by rejecting the ad-supported model of Google. By charging a subscription fee, Kagi removes the incentive to track user behavior or build data profiles. Its AI assistant feature is unique in that it allows users to "scope" the AI to specific websites, reducing the risk of hallucinations and ensuring that the data used for answers is sourced from trusted domains.
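
As an illustration of what domain scoping means in practice, the sketch below filters candidate source URLs against an allowlist before they would be handed to a model. It is not Kagi's implementation; `in_scope` and `scope_sources` are hypothetical names, and matching on hostname suffix is an assumption about how such a filter might work.

```python
from urllib.parse import urlparse


def in_scope(url: str, allowed_domains: set) -> bool:
    """Return True if the URL's host is an allowed domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    # Require an exact match or a dot-separated suffix, so that
    # "evil-python.org" does not pass as "python.org".
    return any(host == d or host.endswith("." + d) for d in allowed_domains)


def scope_sources(urls: list, allowed_domains: set) -> list:
    """Keep only the sources that fall inside the user's chosen scope."""
    return [u for u in urls if in_scope(u, allowed_domains)]
```

Calling `scope_sources` with `{"python.org"}` as the scope would keep a page on `docs.python.org` and drop pages hosted elsewhere.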

DuckDuckGo’s entry into the AI space, Duck.ai, focuses on the concept of proxying. When a user interacts with a model through Duck.ai, DuckDuckGo replaces the user’s IP address with its own. The AI provider (such as Anthropic or Meta) sees a request coming from DuckDuckGo, not the individual user. This provides a high level of network-level anonymity for everyday users who want better privacy than mainstream tools without the complexity of self-hosting, although anything identifying that a user types into the prompt itself still reaches the provider.
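
The proxying idea can be sketched in a few lines. This is a simplified model, not DuckDuckGo's actual code: requests are represented as plain dictionaries, `proxy_request` is a hypothetical name, and the list of identifying headers is an illustrative assumption.

```python
# Headers that can reveal who the end user is. Illustrative list only,
# not DuckDuckGo's real configuration.
IDENTIFYING_HEADERS = {
    "x-forwarded-for", "x-real-ip", "forwarded",
    "cookie", "user-agent", "referer",
}


def proxy_request(incoming: dict, proxy_ip: str) -> dict:
    """Build the request the model provider would see, given the user's request."""
    safe_headers = {
        name: value
        for name, value in incoming["headers"].items()
        if name.lower() not in IDENTIFYING_HEADERS
    }
    return {
        "source_ip": proxy_ip,      # the provider sees the proxy, not the user
        "headers": safe_headers,    # identifying headers are dropped
        "body": incoming["body"],   # the prompt itself passes through unchanged
    }
```

Because the body is forwarded as written, this technique hides where a request came from but not what it says.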

Technical Analysis of Implications and Future Trends

The transition toward private AI is not without its challenges. Stronger privacy often comes at the expense of cost and performance. Tools like Confer are expensive to maintain due to the specialized hardware required for TEEs. Furthermore, the most powerful models, such as GPT-5 or Claude 4, are often gated behind proprietary ecosystems that are inherently resistant to outside privacy audits.

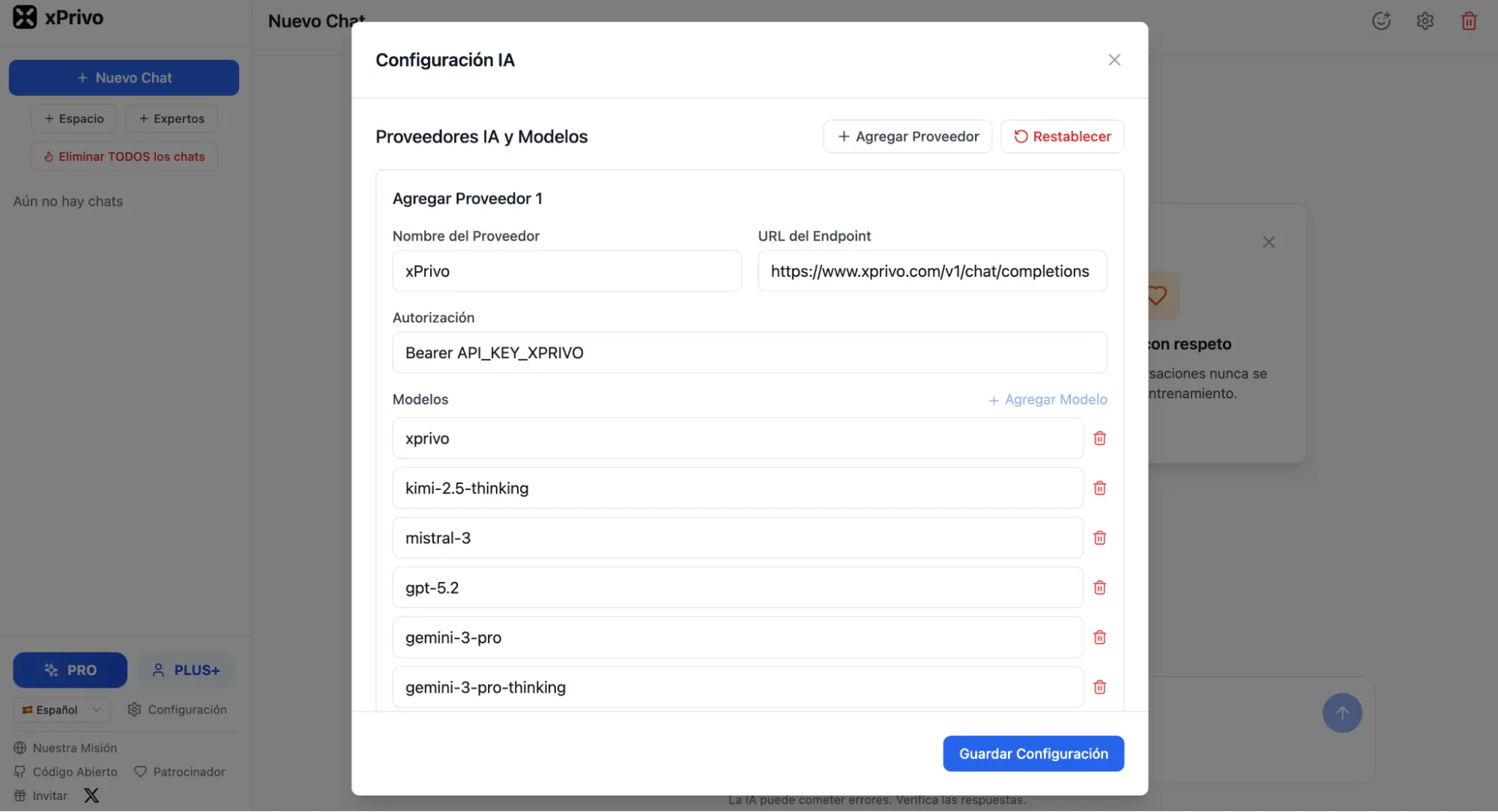

However, the trend toward decentralized and local AI is gaining momentum. Tools like xPrivo allow advanced users to self-host their AI interfaces and connect them to local models running on their own hardware using frameworks like Ollama. This represents the pinnacle of AI privacy, as no data ever leaves the user’s physical machine. As consumer hardware, specifically GPUs and NPU-enabled laptops, becomes more powerful, the need to send data to the cloud for processing will likely diminish.
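
For readers curious what "local" looks like in code, here is a minimal sketch that talks to an Ollama server on the same machine using only the Python standard library. It assumes Ollama is running on its default port (11434) and that a model has already been pulled; the model name `llama3` is an assumption, and the helper names are hypothetical. It does not use or describe xPrivo.

```python
import json
import urllib.request
from urllib.parse import urlparse

# Ollama's default local endpoint for single-prompt generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_local_request(prompt: str, model: str = "llama3") -> urllib.request.Request:
    """Build a POST request for the local Ollama server (nothing is sent yet)."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        OLLAMA_URL,
        data=payload.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def is_loopback(url: str) -> bool:
    """Return True if the URL points at this machine."""
    return urlparse(url).hostname in {"localhost", "127.0.0.1", "::1"}


def ask_local_model(prompt: str, model: str = "llama3") -> str:
    """Send the prompt to the local model and return its reply text."""
    request = build_local_request(prompt, model)
    # Guard against a misconfigured URL silently sending prompts off the machine.
    if not is_loopback(request.full_url):
        raise ValueError("refusing to send prompt to a non-local host")
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

With Ollama running, `ask_local_model("Summarize this contract clause: ...")` would return the model's answer without the text leaving the machine.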

The broader implication for the industry is a looming "trust deficit." If mainstream providers continue to prioritize data harvesting over user confidentiality, they risk a mass exodus of high-value users, including legal professionals, medical researchers, and corporate executives who deal with sensitive intellectual property. The rise of "sovereign AI" is no longer just a niche interest for privacy advocates; it is becoming a business necessity in an era where data is both a company’s greatest asset and its most significant liability.

Conclusion

The 2026 data leak serves as a watershed moment for the AI industry. It exposed the fragility of the "wrapper" economy and the hidden costs of "free" or convenient AI services. As the technology matures, users are being presented with a spectrum of choices: from the strong anonymity of tools such as Internxt and Duck.ai to the hardware-verified security of Confer and the feature-complete privacy of Venice.

The ultimate protection against data exposure is not found in a better privacy policy, but in the selection of tools that are architecturally incapable of betrayal. Whether through local storage, hardware isolation, or IP proxying, the next generation of AI tools is proving that intelligence does not have to come at the expense of intimacy. For the modern user, the goal is clear: to utilize the transformative power of AI without becoming a permanent resident of a corporate data lake.