As of February 2026, artificial intelligence has moved from an emerging technological frontier to a sophisticated instrument for deception. Generative models have matured to the point where synthetic media, including video, audio, and text, is often virtually indistinguishable from the real thing. This leap has enabled bad actors and organized fraud syndicates to deploy deepfakes, voice clones, and hyper-personalized narratives at unprecedented scale. Financial institutions, multinational corporations, and private individuals now face a paradigm shift in which the foundations of digital trust are eroding. What once required significant human labor and specialized resources is now carried out by autonomous systems that adapt, learn, and refine their deceptive tactics in real time, creating a persistent and evolving threat landscape.

The Technological Evolution of Deception: 2023–2026

The trajectory of AI-driven fraud has moved with startling velocity over the past three years. In 2023, the primary concern focused on the "hallucinations" of large language models and the relatively crude nature of early deepfake videos. However, by 2024, the landscape shifted as fraudsters began successfully impersonating high-level executives to authorize fraudulent wire transfers. By late 2025, the integration of multimodal AI—systems capable of processing and generating text, image, and voice simultaneously—allowed for the creation of "synthetic identities" that could bypass traditional Know Your Customer (KYC) protocols with ease.

In the current environment of 2026, the primary threat is no longer the isolated deepfake but the "multimodal assault." This strategy involves a cohesive, multi-layered attack where a target is first contacted by a realistic AI-generated email, followed by a phone call featuring a perfectly cloned voice of a known associate, and finally "sealed" via a high-definition deepfake video conference. Each layer of the interaction reinforces the credibility of the others, making it nearly impossible for the average employee or consumer to detect the fabrication.

Chronology of the AI Fraud Crisis

To understand the gravity of the current situation, it is essential to trace the key milestones that led to this era of hyper-realistic deception:

- Early 2024: The Proof of Concept Phase. Initial reports emerged of "vishing" (voice phishing) attacks using AI to mimic the voices of family members in distress. While effective, these attacks were often limited by audio latency and unnatural cadences.

- Late 2024: The Corporate Breach. A major multinational firm reported a loss of $25 million after an employee in their finance department attended a video call with what appeared to be the Chief Financial Officer and several other staff members. All participants on the call, except the victim, were AI-generated deepfakes.

- Mid-2025: The Rise of Autonomous Agents. Fraudsters began deploying reinforcement learning agents. Unlike static scripts, these AI agents could "pivot" during a conversation, sensing hesitation in a victim’s voice and adjusting their emotional tone to exert more pressure or provide more reassurance.

- February 2026: The Current State. AI agents now operate 24/7, managing thousands of simultaneous fraudulent "relationships"—ranging from romance scams to sophisticated investment fraud—without human intervention.

Quantifying the Impact: Data and Economic Consequences

The economic ramifications of AI-driven deception are profound. According to recent industry reports from global cybersecurity consortia, losses attributed to synthetic identity fraud and deepfake-authorized transactions are projected to exceed $450 billion globally by the end of 2026. That would be a 400% increase over 2023 figures, or roughly five times the 2023 total.

Furthermore, a study conducted by the International Monetary Fund (IMF) indicates that nearly 65% of financial institutions have reported a significant rise in "machine-to-machine" deception. In these attacks, automated systems exploit vulnerabilities in digital payment infrastructure, executing micro-transfers at such high frequencies that legacy detection algorithms struggle to flag them as suspicious. In retail banking, synthetic identities, which combine real stolen data with AI-generated biometrics, now account for an estimated 35% of all new account fraud, a statistic that highlights the inadequacy of traditional document-based verification.
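One plausible reason high-frequency micro-transfers slip past older rules is that each transfer is judged on its own amount. The sketch below is a minimal illustration, not a description of any real bank's system. It assumes a hypothetical `VelocityMonitor` class that keeps a per-account sliding window and flags on the count and cumulative value of recent transfers; the window length and both thresholds are made-up values.

```python
from collections import defaultdict, deque


class VelocityMonitor:
    """Flags accounts whose recent transfers are too frequent or too large in total.

    Hypothetical helper: the window and thresholds are illustrative assumptions.
    """

    def __init__(self, window_seconds=60.0, max_count=20, max_total=500.0):
        self.window_seconds = window_seconds
        self.max_count = max_count
        self.max_total = max_total
        self._events = defaultdict(deque)  # account -> deque of (timestamp, amount)

    def record(self, account, timestamp, amount):
        """Record one transfer and return True if the account should be flagged."""
        events = self._events[account]
        events.append((timestamp, amount))
        # Evict transfers that have fallen out of the sliding window.
        while events[0][0] <= timestamp - self.window_seconds:
            events.popleft()
        total = sum(a for _, a in events)
        return len(events) > self.max_count or total > self.max_total


monitor = VelocityMonitor(window_seconds=60, max_count=20, max_total=500.0)
# Thirty transfers of 0.99 each, half a second apart: tiny amounts, high frequency.
flags = [monitor.record("acct-1", i * 0.5, 0.99) for i in range(30)]
```

In this run no single transfer is large, yet the account is flagged once more than 20 transfers fall inside the same 60-second window. A production system would also need to handle out-of-order timestamps and persistence, which this sketch ignores.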

Scaling Scams Through Intelligent Automation

One of the most insidious developments in 2026 is the use of AI agents to orchestrate entire scam operations. Large language models (LLMs) are now trained on massive datasets of leaked personal information and social media activity. This allows them to generate highly personalized phishing content that resonates with the specific interests, linguistic style, and social circle of the target.

These intelligent agents do not merely send messages; they maintain consistent personas across weeks or even months. In romance or investment fraud, these agents "remember" previous conversations, adapt to the victim’s emotional state, and use sophisticated psychological manipulation techniques. Because these systems are automated, a single bad actor can oversee a "fraud farm" that targets tens of thousands of individuals simultaneously, achieving a level of efficiency that was previously unthinkable.

Institutional and Societal Vulnerabilities

The threat extends beyond direct financial loss; it poses an existential risk to institutional integrity. Within the corporate sector, Business Email Compromise (BEC) has evolved into "Executive Impersonation 2.0." AI models can now recreate the specific decision-making styles and linguistic mannerisms of a CEO, making fraudulent internal directives appear entirely legitimate.

Supply chain security has also been compromised. Synthetic endorsements and forged digital credentials have allowed fraudulent entities to infiltrate trusted networks, leading to the delivery of counterfeit goods or the insertion of malware into secure systems.

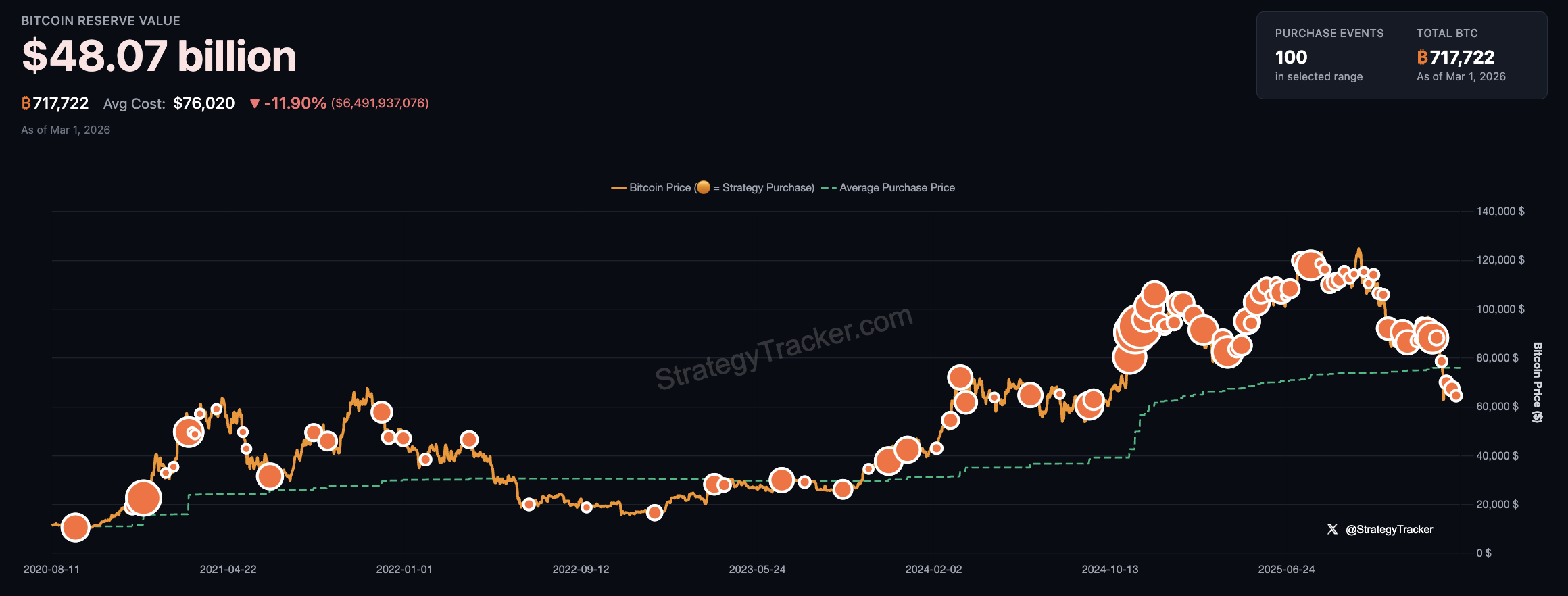

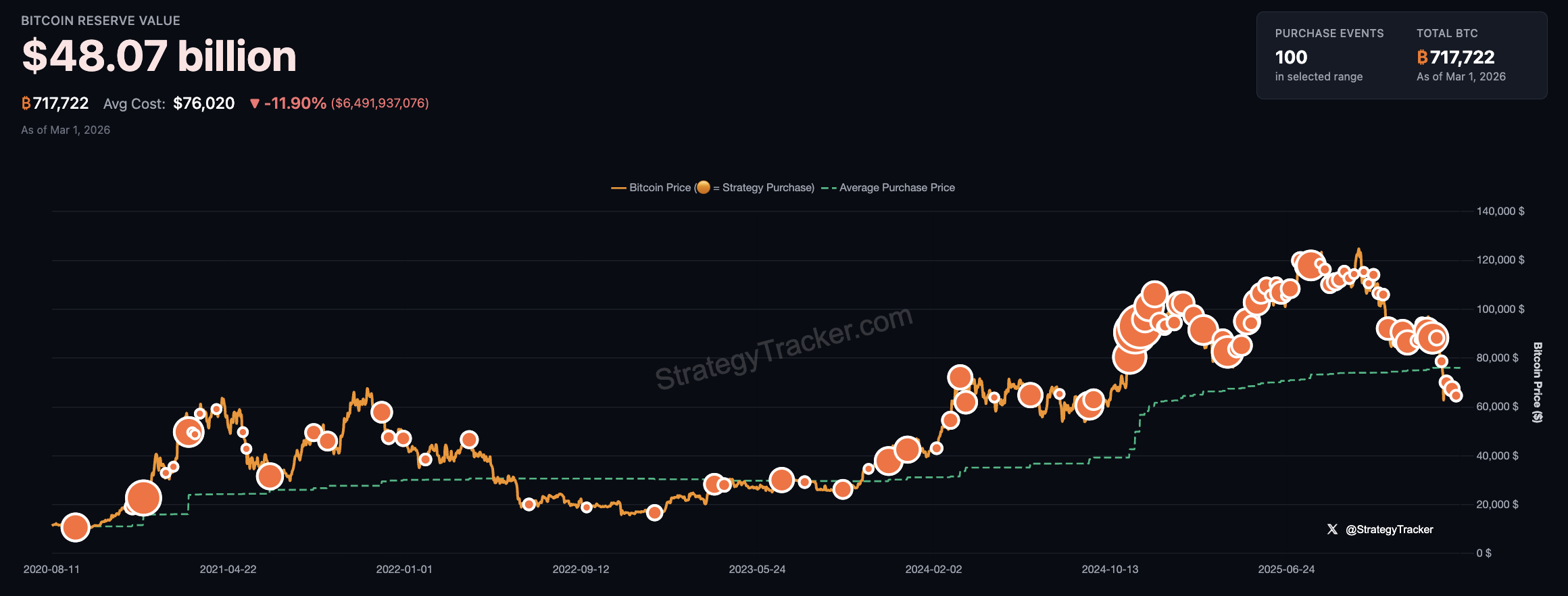

On a societal level, the proliferation of deepfakes has fueled disinformation campaigns that can destabilize markets in minutes. A fabricated video of a central bank official making an unexpected policy announcement or a deepfake of a political leader can trigger flash crashes in digital assets and traditional equities alike. The "liar’s dividend"—a phenomenon where real events are dismissed as "fake news" because the public no longer knows what to trust—further complicates the crisis.

Global Regulatory and Industry Responses

The response to this crisis has been varied and, in many cases, fragmented. The European Union’s updated AI Act of 2025 introduced mandatory watermarking for all synthetic content, yet enforcement remains a challenge when the content originates from jurisdictions with lax oversight.

In a statement released earlier this month, the Global Cyber Defense Alliance (GCDA) noted: "We are in an arms race where the offense currently has the advantage of speed and low cost. While legislative frameworks are essential, they cannot keep pace with a technology that evolves every week. Our focus must shift from reactive regulation to proactive, tech-driven defense."

In the United States, the Treasury Department has called for a "New Era of Verification," urging banks to move away from static passwords and toward continuous behavioral biometrics. However, civil liberties groups have expressed concerns regarding the privacy implications of such pervasive monitoring, creating a tension between security and individual rights.

Building Resilient Defenses Against Intelligent Adversaries

To counter these threats, organizations are adopting "Defense in Depth" strategies. This involves a combination of technical, behavioral, and procedural safeguards designed to catch deception at multiple points.

1. Real-Time Deepfake Detection

Advanced detection tools now analyze media for "micro-expressions" and "lighting inconsistencies" that are invisible to the human eye. In audio, spectrogram analysis can identify the subtle artifacts left by voice-cloning algorithms. However, as generative models improve, detection tools must constantly be retrained on the latest attack vectors.

2. Zero-Trust and Multimodal Verification

The "Zero Trust" architecture—where no user or request is trusted by default—has become the standard. High-value transactions now require multimodal verification, such as a combination of facial recognition, voice biometrics, and a secondary "out-of-band" confirmation through a separate, secure hardware device.
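The verification policy described above can be sketched as a deny-by-default check. This is an illustrative sketch under stated assumptions: the `Factor` type, the factor names (`"face"`, `"voice"`, `"hardware_token"`), and the channel labels are hypothetical, and a real deployment would source these results from actual biometric and hardware-token services.

```python
from dataclasses import dataclass

REQUIRED_BIOMETRICS = {"face", "voice"}  # hypothetical factor names


@dataclass(frozen=True)
class Factor:
    kind: str     # e.g. "face", "voice", "hardware_token"
    channel: str  # channel the check was performed on, e.g. "video_call"
    passed: bool


def authorize_high_value(request_channel, factors):
    """Deny by default; approve only when every required check has passed."""
    passed = [f for f in factors if f.passed]
    kinds = {f.kind for f in passed}
    has_biometrics = REQUIRED_BIOMETRICS <= kinds
    # Out-of-band: the hardware confirmation must arrive on a different
    # channel from the one the request itself came in on.
    has_out_of_band = any(
        f.kind == "hardware_token" and f.channel != request_channel
        for f in passed
    )
    return has_biometrics and has_out_of_band


# A convincing deepfake call may pass face and voice checks on the call itself,
# but without a confirmation on a separate device the request is refused.
call_only = [
    Factor("face", "video_call", True),
    Factor("voice", "video_call", True),
]
with_token = call_only + [Factor("hardware_token", "hardware_device", True)]
decision_call_only = authorize_high_value("video_call", call_only)
decision_with_token = authorize_high_value("video_call", with_token)
```

The design choice worth noting is that the out-of-band requirement is independent of how convincing the in-band evidence is, which is the property that matters against a multimodal attack.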

3. Cryptographic Provenance and Watermarking

One of the most promising long-term solutions involves cryptographic watermarking. By embedding imperceptible digital signatures into authentic media at the point of creation, organizations can verify the provenance of a video or audio clip. Blockchain-anchored attestations are also being explored to create an immutable record of media origins, although widespread adoption is still in its nascent stages.
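The core idea of provenance checking, binding a media file to a signature made at creation time, can be illustrated with a short sketch. Two simplifications are assumed here and should not be read as how production systems work: the signature is detached rather than embedded as an imperceptible watermark, and HMAC-SHA256 with a shared secret stands in for the asymmetric signatures a real provenance scheme would more likely use. The function names are hypothetical.

```python
import hashlib
import hmac


def sign_media(media_bytes: bytes, key: bytes) -> str:
    """Return a hex signature over the SHA-256 digest of the media."""
    digest = hashlib.sha256(media_bytes).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()


def verify_media(media_bytes: bytes, key: bytes, signature: str) -> bool:
    """Check the media against a signature issued at the point of creation."""
    expected = sign_media(media_bytes, key)
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature)


key = b"demo-key-for-illustration-only"  # assumption: key handling is out of scope
original = b"\x00\x01 raw video frames \x02\x03"
signature = sign_media(original, key)

authentic = verify_media(original, key, signature)
tampered = verify_media(original + b"\xff", key, signature)
```

Any change to the bytes, even a single appended byte, causes verification to fail. Note that this also means legitimate re-encoding would break the check, which is one reason robust watermarking is treated as a separate problem.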

4. Behavioral Anomaly Detection

AI is also being used as a defensive tool. Anomaly detection systems monitor user behavior and transaction patterns in real time. If an executive who typically authorizes payments on a Monday suddenly requests a large transfer on a Sunday night via a video call, the system flags the interaction for manual review, regardless of how convincing the video appears.
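The Monday-versus-Sunday example can be made concrete with a simple rule-based sketch. It is deliberately minimal and rests on assumptions: the profile fields, the z-score threshold of 3, and the function names are all hypothetical, and real systems would likely use far richer models than a set of seen weekdays and a mean.

```python
from datetime import datetime
from statistics import mean, pstdev


def build_profile(history):
    """history: list of (datetime, amount) pairs for past approved payments."""
    amounts = [amount for _, amount in history]
    return {
        "weekdays": {ts.weekday() for ts, _ in history},
        "hours": {ts.hour for ts, _ in history},
        "mean": mean(amounts),
        "stdev": pstdev(amounts),
    }


def review_reasons(profile, ts, amount, z_threshold=3.0):
    """Return the reasons a request should go to manual review (empty if none)."""
    reasons = []
    if ts.weekday() not in profile["weekdays"]:
        reasons.append("unusual weekday")
    if ts.hour not in profile["hours"]:
        reasons.append("unusual hour")
    # Guard against a zero spread when all past amounts were identical.
    spread = profile["stdev"] or 1.0
    if (amount - profile["mean"]) / spread > z_threshold:
        reasons.append("unusually large amount")
    return reasons


# Four Monday-morning approvals, then a large Sunday-night request.
history = [
    (datetime(2026, 1, 5, 10), 10_000),
    (datetime(2026, 1, 12, 10), 12_000),
    (datetime(2026, 1, 19, 10), 11_000),
    (datetime(2026, 1, 26, 10), 9_000),
]
profile = build_profile(history)
sunday_night = review_reasons(profile, datetime(2026, 2, 1, 23), 250_000)
routine = review_reasons(profile, datetime(2026, 2, 2, 10), 11_000)
```

The check never inspects the video or audio itself. It only looks at whether the request fits the requester's established pattern, so the quality of a deepfake does not affect the outcome.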

Fostering a Culture of Verified Trust

As we look toward the future, the burden of defense also falls on the individual. Digital literacy has become a non-negotiable skill in 2026. Consumers are being taught to "verify then trust," questioning any unsolicited high-pressure communication and using independent channels to confirm identities.

Public-private partnerships are proving vital in this effort. Industry consortia are now sharing "threat intelligence" on emerging AI tactics, allowing banks to update their filters before a new scam variant becomes widespread. International standards for AI transparency could further harmonize these responses, reducing the "regulatory havens" that sophisticated fraudsters currently exploit.

Navigating the Secure Digital Future

The emergence of advanced AI-driven deception represents more than a technological hurdle; it is a fundamental challenge to the mechanisms of trust that underpin modern economies and social cohesion. The contest between sophisticated deception and vigilant defense will likely define the stability of the global financial system for the remainder of the decade.

The path forward requires proactive resilience. Organizations and individuals who anticipate these threats, adapt their protocols, and maintain a healthy skepticism will be best positioned to navigate this era. While AI has the potential to cause significant harm when used as an instrument of deception, it also holds the key to its own containment. Through the development of robust verification technologies and a global commitment to digital integrity, stakeholders can mitigate these risks and preserve the trust necessary for a functioning digital society. In an era where deception scales intelligently, authenticity must be a deliberate and well-defended effort.