Decentralized Autonomous Organizations, commonly known as DAOs, represent a radical shift in corporate and community governance, replacing traditional hierarchies with flat, token-based voting systems. These entities, which manage billions of dollars in digital assets, rest on the premise that token holders will collectively and transparently steer the direction of a protocol, its treasury, and its strategic evolution. However, a crisis is emerging as advances in generative artificial intelligence give malicious actors the tools to fabricate community consensus at unprecedented scale. This phenomenon, often called synthetic social proof, involves deploying AI-generated personas, automated bot networks, and engineered voting patterns to simulate widespread support for specific agendas, effectively hijacking the democratic processes that underpin decentralized governance.

The core of the issue is that DAOs rely on visible participation metrics, such as forum engagement, Discord activity, and on-chain voting, to gauge the "will of the people." When AI agents capable of mimicking human nuance compromise these metrics, the legitimacy of decentralized governance comes into question. As AI models grow more sophisticated, the line between a genuine community movement and a manufactured campaign is blurring, posing an existential threat to the integrity of decentralized finance (DeFi) and broader Web3 initiatives.

The Evolution of Governance Manipulation: A Chronology

The vulnerability of decentralized voting systems is not a new discovery, but the methods of exploitation have evolved significantly over the last decade. In 2016, the defining incident for early DAOs was the exploit of "The DAO," which stemmed from a smart contract vulnerability rather than from social manipulation. In the years that followed, "Sybil attacks," in which a single user creates multiple wallet addresses to gain disproportionate influence in airdrops or voting, became a persistent concern.

In 2020 and 2021, the "DeFi Summer" and its aftermath established governance tokens as a primary incentive. During this period, manipulation was largely financial: "whale" investors used sheer capital to swing votes. Between 2022 and 2024, however, attackers began adding Large Language Models (LLMs) to their toolkit. This marks the transition from "quantitative manipulation" (buying votes) to "qualitative manipulation" (shaping the narrative).

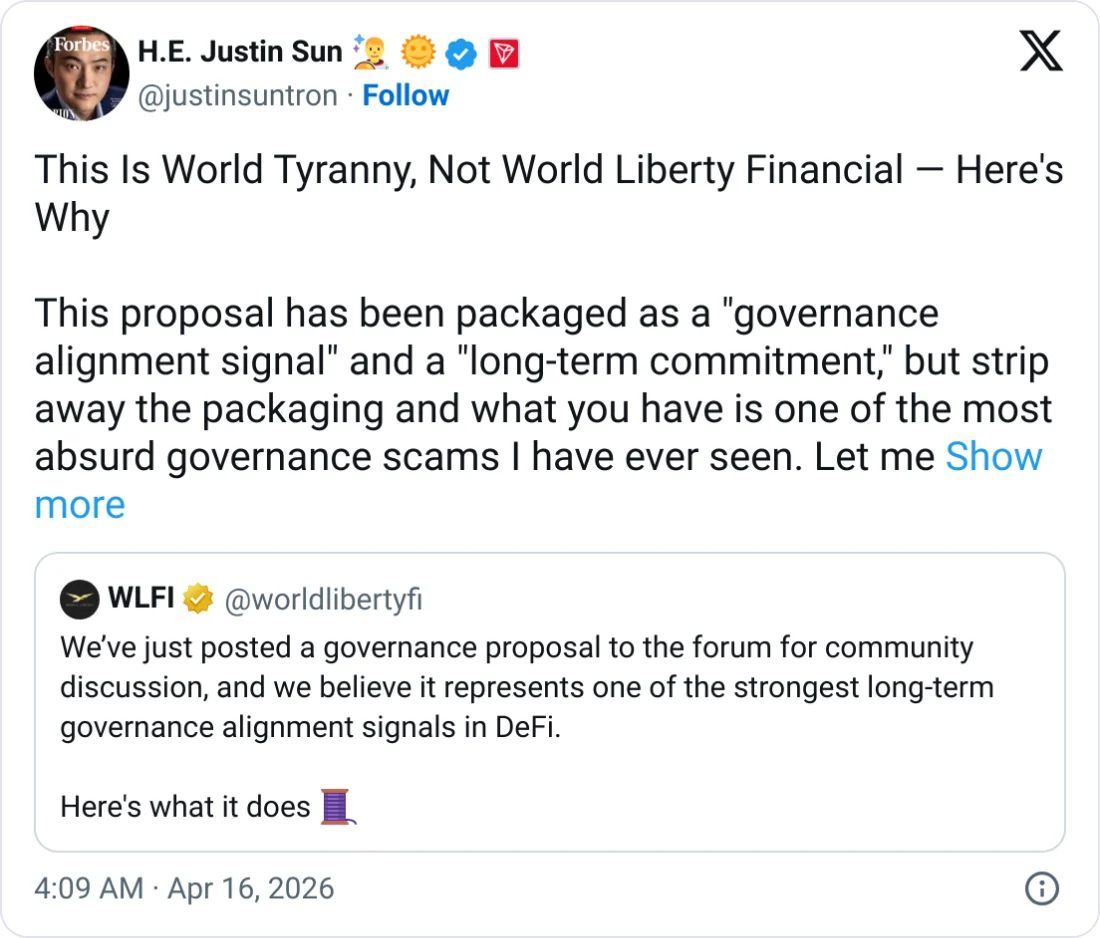

In late 2023, several prominent protocols began noticing anomalies in their governance forums. Proposals that previously saw minimal engagement were suddenly flooded with hundreds of unique, articulate comments supporting controversial changes. Unlike the repetitive spam of previous years, these comments addressed specific technical points, countered critics with tailored arguments, and utilized the jargon specific to each community. Investigative efforts by community moderators revealed that many of these accounts had no prior history but possessed fully fleshed-out digital identities, signaling the arrival of AI-orchestrated astroturfing.

Mechanisms of Synthetic Manipulation and AI Deployment

The barrier to entry for manipulating a DAO has been lowered dramatically by the availability of open-source AI models. Malicious actors now employ a multi-layered approach to simulate organic momentum. The process typically begins with the creation of a "fleet" of AI personas. Using generative models, attackers can produce thousands of unique profile pictures and biographies, ensuring that no two accounts look the same.

Beyond visual identity, LLMs are used to generate context-aware commentary. These agents are programmed to monitor DAO forums and Discord channels in real time. When a target proposal is posted, the AI fleet can be triggered to post supportive arguments, share memes to build cultural rapport, and "like" or "upvote" specific sentiments so that they stay at the top of social feeds. This creates a "bandwagon effect": real human participants who see an apparent majority are more likely to align with the manufactured consensus, or to stay silent if they disagree.

In the voting booth, the manipulation becomes more technical. Machine learning algorithms are now used to optimize how Sybil wallets are distributed and operated. Instead of voting from a thousand wallets simultaneously, which on-chain analytics would easily flag, AI scripts vary the timing of each vote, the number of tokens held in each wallet, and each wallet's voting history to mimic the behavior of diverse, independent users. Some sophisticated actors even use graph neural networks to study the detection patterns of DAO security tools, refining their evasion tactics so that they blend into the "noise" of legitimate network activity.
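Why simultaneous voting is easy to flag can be illustrated with a deliberately naive check. The Python sketch below assumes votes are available as Unix timestamps (for example, exported from a chain indexer) and uses a hypothetical helper, `max_votes_in_window`, that reports the largest number of votes landing in any sliding window of a given length. It is an illustration only, not any analytics vendor's method.

```python
from collections import deque
from typing import Iterable


def max_votes_in_window(timestamps: Iterable[int], window_s: int) -> int:
    """Largest number of votes cast within any window of `window_s` seconds.

    Hypothetical helper: a naive burst detector over vote timestamps.
    """
    window = deque()
    best = 0
    for ts in sorted(timestamps):
        window.append(ts)
        # Drop votes older than `window_s` seconds relative to the current one.
        while window[0] <= ts - window_s:
            window.popleft()
        best = max(best, len(window))
    return best


# 1,000 wallets voting in the same second: trivially flagged.
bot_votes = [1_700_000_000] * 1000
print(max_votes_in_window(bot_votes, window_s=60))  # 1000

# The same 1,000 votes spread out at one vote every 10 minutes.
jittered = [1_700_000_000 + i * 600 for i in range(1000)]
print(max_votes_in_window(jittered, window_s=60))   # 1
```

The second call shows why randomized timing matters: the same thousand votes, spaced ten minutes apart, never exceed one vote per minute, so a burst threshold alone cannot catch them. Monitoring therefore has to draw on additional signals, such as shared wallet funding sources or account age.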

Supporting Data: The Scale of the Governance Ecosystem

To understand the stakes of this manipulation, one must look at the sheer volume of capital governed by DAOs. According to data from DeepDAO, a leading analytics platform, the total treasury value across the DAO ecosystem has fluctuated between $20 billion and $40 billion over the past year. Top-tier DAOs, such as those governing Uniswap, Arbitrum, and Optimism, manage billions of dollars in assets and make decisions that affect hundreds of thousands of users.

Data suggests that voter apathy is a significant enabler of AI manipulation. In many major DAOs, less than 10% of eligible tokens are typically used in any given vote. Because proposals are usually decided by a majority of the votes actually cast, subject to a quorum, rather than by a majority of total supply, low human participation means that a relatively small but highly coordinated AI botnet can easily achieve a majority. Furthermore, a 2023 study on social media bot detection indicated that advanced LLMs can now bypass traditional CAPTCHAs and "proof-of-humanity" puzzles with an accuracy rate exceeding 80%, making it increasingly difficult for community moderators to filter out synthetic actors.
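The arithmetic behind the turnout argument is simple. The Python sketch below assumes a simplified rule, chosen for illustration: a proposal passes when "for" votes strictly exceed "against" votes and total votes cast meet a quorum expressed in basis points of supply. Real governance contracts differ in details such as whether abstentions or "against" votes count toward quorum. The function name and all figures are hypothetical.

```python
def attacker_tokens_needed(votes_for: int, votes_against: int,
                           supply: int, quorum_bps: int = 0) -> int:
    """Minimum tokens an attacker must add to the 'for' side to pass a proposal.

    Simplified, hypothetical rule: passes iff for > against AND
    for + against >= (quorum_bps / 10_000) * supply.
    """
    # Strict majority of votes cast: the attacker must overcome the current deficit.
    majority_gap = max(0, votes_against - votes_for + 1)
    # Quorum threshold in tokens, rounded up using integer arithmetic.
    quorum_tokens = -(-supply * quorum_bps // 10_000)
    # Attacker tokens also count toward quorum.
    quorum_gap = max(0, quorum_tokens - (votes_for + votes_against))
    return max(majority_gap, quorum_gap)


supply = 1_000_000_000
# Hypothetical vote: 8% of supply turns out (30M for, 50M against), 4% quorum.
needed = attacker_tokens_needed(30_000_000, 50_000_000, supply, quorum_bps=400)
print(needed)                    # 20000001
print(f"{needed / supply:.2%}")  # 2.00%
```

Under these assumed numbers, a bloc controlling about 2% of supply overturns a vote in which 8% of supply participated. This is the sense in which low turnout lets a small, coordinated group decide outcomes.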

The economic incentive is equally compelling. For an attacker, the cost of running a sophisticated AI campaign might be a few thousand dollars in server fees and API costs. If that campaign successfully redirects a $10 million treasury grant toward an insider project, the return on investment is astronomical. This lopsided "attack-to-defense" ratio is driving a surge in governance-related security incidents.
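To put a number on that return, assume a campaign cost of C = $5,000 (an illustrative stand-in for "a few thousand dollars") and a captured grant of G = $10,000,000, as in the example above. The simple return on investment is:

$$
\mathrm{ROI} = \frac{G - C}{C} = \frac{10{,}000{,}000 - 5{,}000}{5{,}000} = 1{,}999
$$

That is roughly a 2,000-fold return (199,900%). Even if the assumed cost were ten times higher, at C = $50,000, the ROI would still be (10,000,000 - 50,000) / 50,000 = 199.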

Industry Responses and the Rise of Defensive Technologies

The decentralized community has not remained idle in the face of these threats. Various "Proof of Personhood" (PoP) solutions have emerged to counter the rise of synthetic identities. Projects like Worldcoin use biometric data to verify human uniqueness, while Gitcoin Passport aggregates "stamps" of social and on-chain activity to create a reputation score. The goal is to move away from "one token, one vote" and toward systems that prioritize verified human actors.
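A stamp-based reputation score of the kind described for Gitcoin Passport can be sketched as a weighted sum compared against a threshold. The stamp names, weights, and threshold below are hypothetical placeholders, not Gitcoin Passport's actual scoring configuration. The sketch only shows the general shape of such a scheme.

```python
from typing import Dict, Set

# Hypothetical stamp weights for illustration only (not real Gitcoin Passport values).
STAMP_WEIGHTS: Dict[str, float] = {
    "github_account_over_1yr": 2.5,
    "ens_name": 2.0,
    "phone_verified": 1.5,
    "wallet_age_over_90d": 3.0,
    "vouched_by_member": 5.0,
}


def reputation_score(stamps: Set[str], weights: Dict[str, float] = STAMP_WEIGHTS) -> float:
    """Sum the weights of the stamps a wallet holds; unknown stamps add nothing."""
    return sum(weights.get(stamp, 0.0) for stamp in stamps)


def may_vote(stamps: Set[str], threshold: float = 10.0) -> bool:
    """Gate participation on a minimum score (hypothetical threshold)."""
    return reputation_score(stamps) >= threshold


fresh_bot = {"phone_verified"}
long_time_member = {"github_account_over_1yr", "wallet_age_over_90d", "vouched_by_member"}
print(reputation_score(fresh_bot), may_vote(fresh_bot))                # 1.5 False
print(reputation_score(long_time_member), may_vote(long_time_member))  # 10.5 True
```

The design trade-off is visible even in this toy version. Each stamp raises the cost of fabricating an identity, but an attacker who can cheaply acquire enough low-value stamps still crosses the threshold. The heaviest weights therefore belong on signals that are expensive to fake.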

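In addition to identity solutions, DAOs are implementing more complex voting mechanisms. Quadratic voting, for instance, charges n² credits for n votes, so each additional vote from the same entity costs more than the last, and influence grows only with the square root of the credits spent. This diminishes the power of "whales," but only when each participant is a verified unique individual. An attacker who splits holdings across many Sybil wallets pays the lower quadratic cost in each one, which is why quadratic schemes are usually paired with proof-of-personhood checks. Conviction voting, another emerging model, lets voting weight accumulate the longer tokens remain staked on a proposal. It rewards long-term commitment over sudden surges in participation, a design choice intended to thwart transient bot swarms.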
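Both mechanisms reduce to short formulas. The Python sketch below assumes the textbook quadratic rule (casting n votes costs n² credits) and a discrete-time update of the form used in several conviction-voting designs, y_t = α · y_{t-1} + x, for a constant stake x and decay factor α. The decay value of 0.9 and the token amounts are illustrative assumptions, not parameters of any particular deployment.

```python
import math


def quadratic_votes(credits: int) -> int:
    """Votes purchasable when casting n votes costs n**2 credits."""
    return math.isqrt(credits)


def conviction_after(stake: float, periods: int, alpha: float = 0.9) -> float:
    """Conviction after `periods` steps of y = alpha * y + stake, starting at 0.

    Assumed update rule and decay factor, for illustration only.
    """
    y = 0.0
    for _ in range(periods):
        y = alpha * y + stake
    return y


# Quadratic voting: one identity with 10,000 credits vs. the same credits
# split across 100 Sybil wallets holding 100 credits each.
single_wallet = quadratic_votes(10_000)   # 100 votes
sybil_split = 100 * quadratic_votes(100)  # 1,000 votes
print(single_wallet, sybil_split)         # 100 1000

# Conviction voting: a holder staking 1,000 tokens for 30 periods vs. a swarm
# staking 5,000 tokens for a single period just before the decision.
print(round(conviction_after(1_000, 30)))  # 9576
print(round(conviction_after(5_000, 1)))   # 5000
```

The first comparison shows why quadratic voting needs an identity layer: splitting the same credits across wallets multiplies the vote count tenfold. The second shows how conviction favors sustained stakes: five times the tokens, staked only briefly, accumulate less conviction than the long-term holder. Under this update rule, a constant stake approaches an upper bound of x / (1 - α), so conviction can only be accrued over time, not bought instantly.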

Leading blockchain security firms have also begun offering "Governance Monitoring" services. These services use machine learning to analyze voting patterns and social media sentiment in tandem. By identifying "clusters" of accounts that always act in perfect synchronization or exhibit identical linguistic patterns, these tools can provide real-time alerts to DAO members. In several instances, these alerts have led to the pausing of votes and the disqualification of manipulated outcomes, though such interventions remain controversial in the context of "unstoppable" decentralized protocols.
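One of the signals mentioned above, identical linguistic patterns, can be approximated with word-level shingling and Jaccard similarity. The sketch below is a hypothetical illustration, not any vendor's monitoring pipeline. It breaks each account's post into overlapping three-word sequences, links accounts whose posts overlap above an assumed threshold of 0.5, and groups linked accounts with a small union-find structure. The account names and posts are invented.

```python
import re
from itertools import combinations
from typing import Dict, List, Set, Tuple


def shingles(text: str, k: int = 3) -> Set[Tuple[str, ...]]:
    """Overlapping k-word sequences from lowercased text (punctuation dropped)."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: Set[Tuple[str, ...]], b: Set[Tuple[str, ...]]) -> float:
    """Size of the intersection over size of the union; 0.0 if both are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_account_clusters(posts: Dict[str, str], threshold: float = 0.5) -> List[Set[str]]:
    """Group accounts whose posts reach `threshold` similarity (hypothetical threshold)."""
    parent = {acct: acct for acct in posts}

    def find(x: str) -> str:
        # Union-find root lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    sigs = {acct: shingles(text) for acct, text in posts.items()}
    for a, b in combinations(posts, 2):
        if jaccard(sigs[a], sigs[b]) >= threshold:
            parent[find(a)] = find(b)

    groups: Dict[str, Set[str]] = {}
    for acct in posts:
        groups.setdefault(find(acct), set()).add(acct)
    # Only groups with more than one account are suspicious.
    return [g for g in groups.values() if len(g) > 1]


posts = {
    "acct_a": "This proposal strengthens treasury diversification and reduces protocol risk for all holders.",
    "acct_b": "This proposal strengthens treasury diversification and reduces protocol risk for every holder.",
    "acct_c": "This proposal strengthens treasury diversification and reduces protocol risk for all holders!",
    "acct_d": "I disagree; the grant size is too large and the team has no track record.",
}
clusters = similar_account_clusters(posts)
print([sorted(g) for g in clusters])  # [['acct_a', 'acct_b', 'acct_c']]
```

Lexical overlap catches templated or lightly edited posts like these. LLM-generated comments that paraphrase freely will largely evade it, so a check like this is only useful alongside behavioral signals such as vote timing. The pairwise loop is quadratic in the number of accounts; at forum scale, MinHash with locality-sensitive hashing is a standard way to find candidate pairs.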

Broader Impact and Ethical Implications for Decentralized Governance

The erosion of trust caused by synthetic consensus extends beyond financial loss; the very "moral authority" of a DAO is at risk. If a community believes that its decisions are being dictated by an algorithm rather than collective human intent, the incentive to participate vanishes. This leads to a "death spiral" of engagement: high-quality contributors and developers depart, and the vacuum they leave is filled by more bots and bad actors.

Furthermore, the rise of manufactured consensus threatens the principle of "forking." In the blockchain world, a community that disagrees with a protocol's direction can "fork" it to start a new version. However, if an AI campaign successfully masks its presence and maintains a facade of broad agreement, dissenters may conclude that they have simply been outvoted by a genuine majority. Never realizing that a single entity manufactured the apparent consensus, they have little reason to fork, and this natural corrective mechanism never activates.

There is also a geopolitical dimension to this trend. State actors and large corporate entities are increasingly viewing DAOs as testing grounds for broader social manipulation techniques. The strategies refined in the crypto space—using AI to steer sentiment and capture governance—could eventually be applied to national elections, corporate shareholder votes, and public discourse.

Conclusion: Defending the Authenticity of Collective Intelligence

The battle against artificial consensus is fundamentally a struggle to preserve the "human" in human-centric technology. As generative AI continues to advance, the technical safeguards of yesterday—simple filters and basic wallet tracking—will no longer suffice. The future of decentralized autonomous organizations depends on a multi-pronged approach that combines robust on-chain identity verification, sophisticated behavioral analytics, and a cultural shift toward valuing verifiable reputation over sheer volume of activity.

The survival of the DAO model rests on its ability to prove that it is, in fact, a community of people rather than a collection of scripts. If the industry fails to address the threat of synthetic social proof, decentralized governance risks becoming little more than an elaborate theater where the most advanced algorithm, rather than the best idea, wins. Proactive design choices, transparency mandates, and a commitment to authentic participation are the only ways to ensure that the promise of the "many" is not silenced by the "machine."