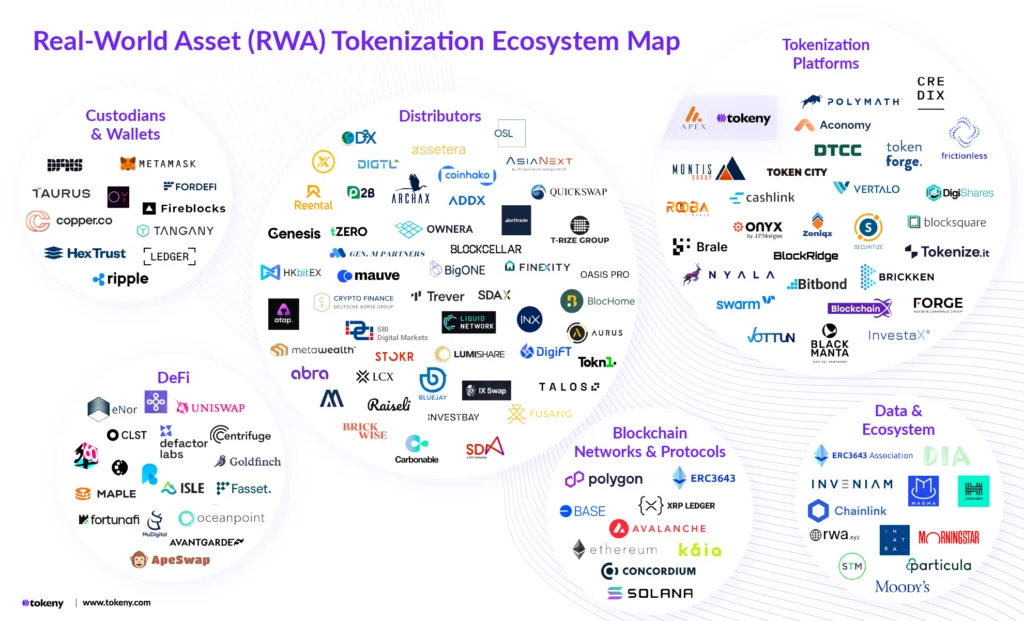

The landscape of international finance is undergoing a dual transformation, driven by the rapid development of central bank digital currencies (CBDCs) and the simultaneous emergence of sophisticated artificial intelligence. While blockchain technology promises transparency and efficiency, a shadowy threat has materialized in the form of hyper-realistic deepfakes. These AI-generated facsimiles, capable of mimicking the voice, appearance, and mannerisms of world leaders and financial regulators, are now infiltrating the high-stakes world of global cryptocurrency negotiations. Diplomats and financial architects, who once relied on the trust implicit in face-to-face meetings or verified digital communications, now face a reality where the line between authenticity and synthetic deception has become dangerously blurred.

The Emergence of Synthetic Envoys in the Virtual Diplomatic Arena

As the global financial system shifts toward digitization, the traditional "smoke-filled room" of diplomacy has increasingly given way to encrypted video conferences and virtual summits. These digital forums, while convenient, have inadvertently created a playground for malicious actors using generative AI. The "synthetic envoy", a fabricated or impersonated negotiator, is no longer a theoretical concern but a pressing security challenge.

In a typical high-level negotiation, representatives from central banks and regulatory bodies discuss the technicalities of cross-border payment rails, stablecoin reserves, and anti-money laundering (AML) frameworks. The stakes are immense: a single policy change regarding the digital euro or the digital yuan can move billions of dollars in market capitalization. Deepfake technology allows an adversary to inject a convincing digital doppelgänger into these sessions. This impersonator can propose radical regulatory changes, endorse specific private-sector crypto assets, or intentionally sow discord between allied nations. The democratization of AI tools means that these capabilities are no longer restricted to nation-state actors but are increasingly available to sophisticated cybercriminal syndicates.

A Chronology of AI-Driven Diplomatic Interference

The trajectory of deepfake interference in financial diplomacy has moved with alarming speed over the last decade. Understanding this timeline is essential for recognizing the gravity of the current threat.

- 2014–2017: The Foundation of GANs. Generative Adversarial Networks (GANs), introduced by Ian Goodfellow and colleagues in 2014, provided the mathematical framework for AI to "learn" how to recreate realistic imagery and audio. Early applications were crude and easily detectable.

- 2018–2020: The Rise of Voice Cloning. Financial institutions began reporting "vishing" (voice phishing) attacks in which AI-cloned voices of CEOs were used to authorize fraudulent wire transfers. These were among the first publicly reported uses of synthetic media in high-value financial crime.

- 2021–2022: Preliminary Diplomatic Infiltration. Reports surfaced of "deepfake" politicians contacting European officials. These early attempts often featured static backgrounds and slight lag, allowing vigilant staffers to identify the ruse.

- 2023–Present: Real-Time Interactive Deepfakes. The current era features low-latency, high-definition synthetic video that can react to questions in real time. This is the technology now threatening crypto negotiations, where the "liveness" of a participant is no longer a guarantee of their identity.

Case Studies: Real-World Incidents on the Crypto Frontlines

Two recent incidents have sent shockwaves through the international regulatory community, serving as a wake-up call for the vulnerability of digital finance.

In the first instance, a virtual working group focused on the integration of the digital euro was targeted. An AI-generated version of a senior economic advisor to the European Union appeared on a secure call. The deepfake was meticulously crafted, drawing on hours of public testimony to perfect the advisor’s cadence and vocabulary. The impostor argued passionately for reduced oversight of decentralized finance (DeFi) protocols, suggesting that "innovation must precede regulation." The deception nearly succeeded, with several delegates taking notes for policy drafting. Only when the impostor used an American idiom uncharacteristic of the European official did a senior staffer grow suspicious and initiate an out-of-band verification, which revealed that the real advisor was in a different meeting entirely.

A second, more public incident occurred during an Asia-Pacific Economic Cooperation (APEC) sub-committee meeting on sustainable energy. A deepfake of a prominent regional economist was used to lobby for the expansion of unregulated crypto-mining operations in specific jurisdictions. The synthetic figure used sophisticated technical jargon to obscure the environmental impact of the proposal. The session was halted when a participant noticed that the background lighting in the economist’s video feed did not match the local time of day at the economist’s supposed location. These cases suggest that, while the AI is becoming ever more convincing, human intuition and environmental inconsistencies remain the primary lines of defense.

Technical Mechanics: How Deepfakes Manipulate Financial Sentiment

The creation of a diplomatic deepfake is a multi-stage process that leverages Open Source Intelligence (OSINT). Because public officials frequently give speeches, participate in televised interviews, and post on social media, there is a wealth of high-quality data available to train machine learning models.

Perpetrators use this data to map the "facial landmarks" of a target: the specific way their mouth moves when speaking or how their eyes crinkle. Once the model is trained, a "puppeteer" can use a standard webcam to drive the movements of the high-resolution digital skin. In the context of crypto diplomacy, this is particularly dangerous. If a deepfaked video of a central bank governor endorsing a specific blockchain protocol were to "leak," it could trigger a "flash pump" or a market-wide crash before the official could issue a denial. Because crypto markets trade 24/7, they leave little room for the slow-moving process of official verification.

Data and Impact: The Economic Cost of Synthetic Deception

The financial implications of AI impersonation are staggering. According to industry reports from cybersecurity firms, identity fraud losses involving synthetic media increased by over 300% between 2022 and 2023. In the cryptocurrency sector, where "sentiment" often drives "value," the impact is amplified.

- Market Volatility: A fraudulent announcement regarding a "Bitcoin ban" or a "Stablecoin mandate" by an impersonated official could result in billions of dollars in liquidations within minutes.

- Erosion of Trust: If diplomats cannot trust the identity of their counterparts, international cooperation on crucial issues such as the "Travel Rule" (FATF Recommendation 16, which requires virtual asset service providers to pass originator and beneficiary information along with transfers) could stall, creating loopholes for money laundering and terrorist financing.

- Resource Diversion: Nations are now being forced to divert significant portions of their digital transformation budgets away from infrastructure and toward "identity assurance" technologies.

Official Responses and the Search for a Solution

The international community has begun to react, though many experts argue the response is lagging behind the technology. The Financial Action Task Force (FATF) and the G20 have both highlighted the risks of "technological misuse" in their recent communiqués.

Proposals for safeguarding the future of digital diplomacy include:

- Cryptographic Identity Verification: Moving away from simple video feeds toward a system where every participant must sign their video stream with a unique private key whose public counterpart is registered on a blockchain. Recording those signatures on the ledger creates an "immutable audit trail" of identity.

- Multi-Modal Biometrics: Implementing real-time "liveness detection" that requires participants to perform random tasks, such as turning their head or reading aloud a randomly generated phrase they could not have seen in advance, to make it harder for a pre-recorded loop or AI-driven feed to pass.

- The "Human-in-the-Loop" Protocol: Mandating that all high-stakes crypto negotiations be shadowed by secondary verification teams who communicate via separate, analog channels to confirm the validity of the digital session.
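
The cryptographic identity verification proposal can be illustrated with a minimal Python sketch. It assumes the stream is cut into chunks and that each chunk is authenticated together with its position and a digest of the previous chunk, forming a hash chain so that altered, reordered, or spliced segments fail verification. To stay within the standard library, HMAC-SHA256 stands in for a true digital signature; a real deployment would use an asymmetric scheme such as Ed25519, so that counterparts verify against a registered public key without ever holding the signing key. The names `SignedSegment`, `sign_stream`, and `verify_stream` are hypothetical.

```python
import hashlib
import hmac
from dataclasses import dataclass

# ASSUMPTION: HMAC-SHA256 is a symmetric stand-in for an asymmetric signature
# (e.g. Ed25519). With HMAC, anyone who can verify can also forge.
GENESIS = b"\x00" * 32  # placeholder "previous digest" for the first segment


@dataclass(frozen=True)
class SignedSegment:
    index: int          # position of the chunk in the stream
    prev_digest: bytes  # digest of the previous segment, which links the chain
    payload: bytes      # raw bytes of one chunk of audio/video
    tag: bytes          # authentication tag over index, prev_digest, payload


def _message(index: int, prev_digest: bytes, payload: bytes) -> bytes:
    # Bind position and chain link into what gets authenticated, so segments
    # cannot be reordered or spliced in from another stream.
    return index.to_bytes(8, "big") + prev_digest + hashlib.sha256(payload).digest()


def segment_digest(seg: SignedSegment) -> bytes:
    # Digest of a whole segment; the next segment commits to this value.
    return hashlib.sha256(_message(seg.index, seg.prev_digest, seg.payload) + seg.tag).digest()


def sign_stream(key: bytes, chunks: list[bytes]) -> list[SignedSegment]:
    segments: list[SignedSegment] = []
    prev = GENESIS
    for i, chunk in enumerate(chunks):
        tag = hmac.new(key, _message(i, prev, chunk), hashlib.sha256).digest()
        seg = SignedSegment(i, prev, chunk, tag)
        segments.append(seg)
        prev = segment_digest(seg)
    return segments


def verify_stream(key: bytes, segments: list[SignedSegment]) -> bool:
    prev = GENESIS
    for i, seg in enumerate(segments):
        if seg.index != i or seg.prev_digest != prev:
            return False  # reordered, dropped, or spliced segment
        expected = hmac.new(key, _message(i, prev, seg.payload), hashlib.sha256).digest()
        if not hmac.compare_digest(expected, seg.tag):
            return False  # payload altered, or tagged with a different key
        prev = segment_digest(seg)
    return True


if __name__ == "__main__":
    key = b"hypothetical-participant-key"
    stream = sign_stream(key, [b"frame-0", b"frame-1", b"frame-2"])
    print(verify_stream(key, stream))  # True
    forged = list(stream)
    forged[1] = SignedSegment(1, stream[1].prev_digest, b"forged", stream[1].tag)
    print(verify_stream(key, forged))  # False
```

A hash chain only makes the log tamper-evident: silently dropping the final segments goes unnoticed unless the last digest is also published somewhere both parties trust, which is the role the proposal assigns to the blockchain.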
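
The liveness-detection proposal rests on challenges that cannot be predicted and must be answered quickly. The following hypothetical Python sketch issues a random task and phrase using the standard `secrets` module and accepts a response only if it arrives within a deadline. It assumes speech recognition and video analysis happen elsewhere and hand over an already transcribed phrase and a yes/no judgment on the task; the task list, word list, and ten-second window are illustrative values, not a standard.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical challenge pools; a real system would use far larger ones.
TASKS = ("turn head left", "turn head right", "raise right hand", "blink twice")
WORDS = ("amber", "harbor", "meridian", "quartz", "tundra", "velvet", "willow", "zephyr")


@dataclass(frozen=True)
class Challenge:
    task: str           # physical action the participant must perform
    phrase: str         # words the participant must read aloud
    issued_at: float    # time.monotonic() timestamp when the challenge was issued
    ttl_seconds: float  # how long a response remains acceptable


def issue_challenge(ttl_seconds: float = 10.0) -> Challenge:
    # secrets draws from the OS random source, so challenges are not predictable.
    task = secrets.choice(TASKS)
    phrase = " ".join(secrets.choice(WORDS) for _ in range(4))
    return Challenge(task, phrase, time.monotonic(), ttl_seconds)


def check_response(
    ch: Challenge, spoken_phrase: str, task_observed: bool, responded_at: float
) -> bool:
    # ASSUMPTION: spoken_phrase and task_observed come from separate
    # speech-recognition and video-analysis components not shown here.
    on_time = 0 <= responded_at - ch.issued_at <= ch.ttl_seconds
    phrase_ok = spoken_phrase.strip().lower() == ch.phrase
    return on_time and phrase_ok and task_observed


if __name__ == "__main__":
    ch = issue_challenge()
    print(f"Please {ch.task} and say: {ch.phrase!r}")
    # Simulated participant who answers immediately and correctly.
    print(check_response(ch, ch.phrase, task_observed=True, responded_at=time.monotonic()))  # True
```

This design only narrows the attacker's window. As the timeline above notes, current real-time deepfakes can react to questions, so a challenge like this raises the cost of impersonation rather than ruling it out. The eight-word list here also yields just 8^4 = 4,096 possible phrases; a practical pool would be far larger.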

Broader Implications: The Future of Truth in a Virtual World

The threat of deepfakes in crypto negotiations is a microcosm of a larger societal challenge: the death of visual evidence. For decades, "seeing was believing." In the age of generative AI, visual and auditory data are no longer sufficient proof of reality.

For the cryptocurrency ecosystem, which was founded on the principle of "don’t trust, verify," this is a moment of reckoning. The irony is that the very technology under discussion, blockchain, may offer the strongest answer to the AI threat. By building decentralized identity (DID) frameworks, the international community can move toward a "trustless" form of diplomacy in which identity is verified by mathematics rather than by appearance.

As Dr. Pooyan Ghamari and other commentators have noted, the intersection of AI and finance requires a new era of "digital vigilance." The goal is not to retreat from digital diplomacy, but to fortify it. The future of the global economy rests on the ability of its leaders to distinguish between a genuine partner and a synthetic shadow. Only through a combination of robust regulatory frameworks, advanced cryptographic tools, and a heightened sense of skepticism can the world navigate the turbulent waters of AI-driven financial negotiations. The integrity of the global crypto-economy depends not just on the strength of the code, but on the authenticity of the humans who govern it.