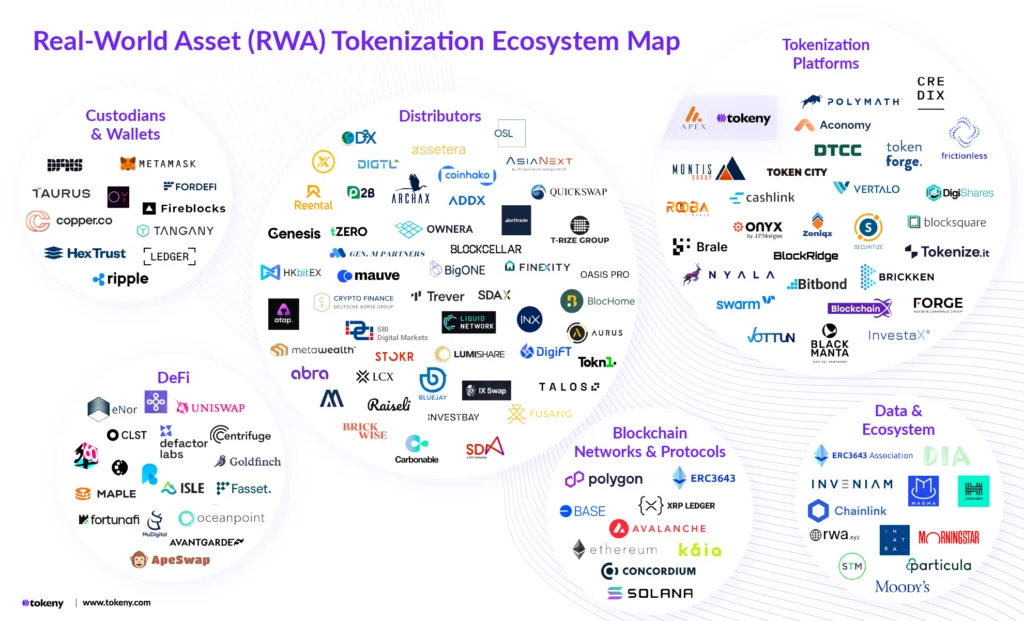

The release of the Qwopus model family marks a shift in large language model (LLM) accessibility: the project aims to bring the reasoning capabilities of closed-source frontier models to local, consumer-grade hardware. Developed by an independent researcher known as Jackrong, it distills the chain-of-thought behavior of Anthropic’s Claude 4.6 Opus into a more compact, 27-billion-parameter model based on Alibaba’s Qwen 3.5. The development is a milestone in the "democratization" of high-level AI, moving sophisticated planning and coding logic out from behind pay-per-token APIs and into the hands of individual developers and researchers.

The Evolution of Local AI and the Distillation Gap

For much of the past three years, a clear hierarchy has defined the AI industry: frontier models like Claude Opus and GPT-4 resided exclusively on corporate servers, accessible only via API, while open-source models struggled to match their deep reasoning and multi-step planning capabilities. Although open-source bases like Llama and Qwen have grown increasingly powerful, they have often lacked the "internal monologue" or structured reasoning characteristic of the most advanced proprietary systems.

To bridge this gap, Jackrong used a technique known as knowledge distillation. In machine learning, distillation involves a "teacher" model (in this case, Claude 4.6 Opus) and a "student" model (Qwen 3.5-27B). The student does not merely learn the teacher’s final answers; it is trained on the teacher’s reasoning traces: the step-by-step logic, self-corrections, and planning phases that precede a final response. By fine-tuning the 27B model on these traces, the developer has enabled a smaller, more efficient system to mimic the reasoning patterns of a much larger teacher.
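
As a rough illustration of what training on reasoning traces can look like, the sketch below packs a teacher's trace and final answer into one supervised fine-tuning record. The `<think>` delimiters, the field names, and the `build_record` helper are assumptions made for illustration; the project's actual data format is not described here.

```python
from dataclasses import dataclass


@dataclass
class TeacherSample:
    """One prompt plus the teacher's reasoning trace and final answer."""
    prompt: str
    reasoning: str
    answer: str


def build_record(sample: TeacherSample) -> dict:
    """Turn a teacher sample into a prompt/completion pair for the student.

    The completion keeps the reasoning trace in front of the answer, so the
    student is trained to produce the steps and not only the result.
    The <think> tags are a hypothetical delimiter choice.
    """
    completion = (
        f"<think>\n{sample.reasoning.strip()}\n</think>\n{sample.answer.strip()}"
    )
    return {"prompt": sample.prompt.strip(), "completion": completion}


# A made-up sample, used only to show the resulting shape.
sample = TeacherSample(
    prompt="Is 91 prime?",
    reasoning="91 = 7 * 13, so it has divisors other than 1 and itself.",
    answer="No, 91 is not prime.",
)
record = build_record(sample)
print(record["completion"])
```

The point of the layout is that the answer only appears after the trace, so a student trained on such records learns to emit its working before committing to a result.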

Chronology of the Qwopus Project

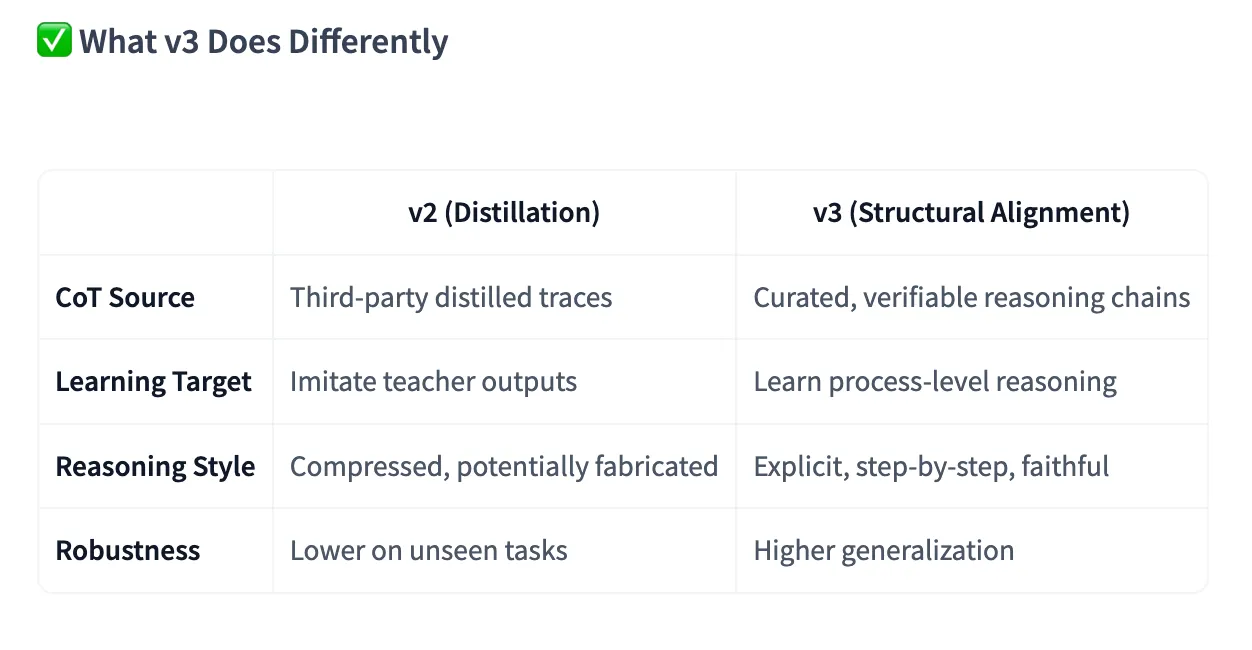

The project’s trajectory moved through several critical phases of refinement, starting with the release of the initial distilled model and culminating in the current v3 iteration.

The first release, Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled, focused primarily on replicating the "Chain of Thought" (CoT) style of Claude. Early adopters and community testers noted that this version allowed the model to maintain a "thinking mode" when integrated with coding agents like Claude Code. Unlike the base Qwen model, which occasionally stalled during complex autonomous tasks, the distilled version demonstrated an ability to run for extended periods without losing its logical thread.

Following community feedback, Jackrong developed Qwopus3.5-27B-v3. This successor introduced a concept the developer calls "structural alignment." While the first version was an exercise in stylistic imitation, v3 was trained to prioritize faithful step-by-step reasoning. This involved explicit reinforcement of tool-calling capabilities and a more rigorous fine-tuning process aimed at agent-based workflows. The v3 release also integrated multimodal support, allowing the model to process visual data when paired with specific vision weights, further narrowing the utility gap between local models and frontier APIs.

Technical Specifications and Benchmark Performance

The Qwopus v3 model is built on the Qwen 3.5-27B architecture, a choice that balances performance with hardware accessibility. A 27-billion-parameter model is small enough to run, typically in quantized form, on high-end consumer GPUs or modern workstations with unified memory, such as the Apple Mac Studio or MacBook Pro with 32GB of RAM or more.
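
A back-of-the-envelope estimate shows why the parameter count matters for hardware. The sketch below computes the memory taken by the weights alone at a few nominal precisions; the `weight_memory_gb` helper is hypothetical, and real quantized files plus the inference-time context cache add overhead on top of these figures.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 27e9  # 27 billion parameters, as stated above

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(N_PARAMS, bits):.1f} GB")
# 16-bit: 54.0 GB
# 8-bit: 27.0 GB
# 4-bit: 13.5 GB
```

Under these assumptions, only the lower-precision variants leave headroom on a 32GB machine, which is consistent with the models being distributed for local inference.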

In reported evaluations, the model performs above what its size would suggest. On the HumanEval benchmark, a standard measure of a model’s ability to write functional code, Qwopus v3 scored 95.73%. This result surpasses not only its predecessor but also the base Qwen 3.5-27B model and several larger competitors.
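
To put the percentage in concrete terms, the sketch below assumes the score is a single-attempt pass rate over the standard 164-problem HumanEval set (the article does not state the exact protocol) and finds which solved-count is consistent with 95.73%. The `pass_rate` helper is hypothetical.

```python
HUMANEVAL_PROBLEMS = 164  # size of the standard HumanEval problem set


def pass_rate(solved: int, total: int = HUMANEVAL_PROBLEMS) -> float:
    """Percentage of problems whose generated solution passes all unit tests."""
    return 100.0 * solved / total


# Find the solved-counts whose pass rate rounds to the reported 95.73%.
matches = [
    k for k in range(HUMANEVAL_PROBLEMS + 1)
    if abs(pass_rate(k) - 95.73) < 0.005
]
print(matches)  # [157]
```

Under that assumption, the reported figure corresponds to 157 of 164 problems solved, that is, seven failures.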

To achieve these results, the training and release pipeline relied on several tools and techniques:

- Unsloth: Used for memory-efficient training, allowing for faster iterations.

- LoRA (Low-Rank Adaptation): Enabled fine-tuning of the large base model by training small low-rank adapter matrices while the original weights stay frozen, without the need for industrial-scale compute clusters.

- Response-Only Fine-Tuning: A method that computes the training loss only on the model’s response tokens, so learning focuses on the quality and logic of the output rather than on reproducing the prompt.

- GGUF Export: The final models are provided in GGUF format, ensuring compatibility with popular local inference engines like LM Studio and llama.cpp.
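
Two of the techniques in this list can be sketched in a few lines of plain Python. The first function shows the label masking behind response-only fine-tuning; the second counts the trainable parameters LoRA adds to a single weight matrix. The token ids, layer size, and rank below are hypothetical values chosen for illustration, not the project's settings, and the real pipeline would rely on library implementations of both ideas.

```python
IGNORE_INDEX = -100  # label value that common cross-entropy implementations skip


def mask_prompt_labels(token_ids: list[int], prompt_len: int) -> list[int]:
    """Response-only labels: prompt positions are ignored, response positions kept."""
    return [IGNORE_INDEX] * prompt_len + token_ids[prompt_len:]


def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight matrix.

    The frozen weight W is adjusted by B @ A, with A of shape (rank, d_in)
    and B of shape (d_out, rank).
    """
    return rank * (d_in + d_out)


# Hypothetical token ids: 4 prompt tokens followed by 3 response tokens.
tokens = [11, 12, 13, 14, 21, 22, 23]
labels = mask_prompt_labels(tokens, prompt_len=4)
print(labels)  # [-100, -100, -100, -100, 21, 22, 23]

# Hypothetical layer size and rank, chosen only to show the ratio.
full = 4096 * 4096
added = lora_trainable_params(4096, 4096, rank=16)
print(added, f"{100 * added / full:.2f}% of the full matrix")
# 131072 0.78% of the full matrix
```

With the prompt positions masked, the loss only rewards the model for reproducing the response, and with a small rank the adapter is a tiny fraction of the matrix it modifies, which is what keeps memory requirements low.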

Rigorous Testing: Creative Writing and Complex Coding

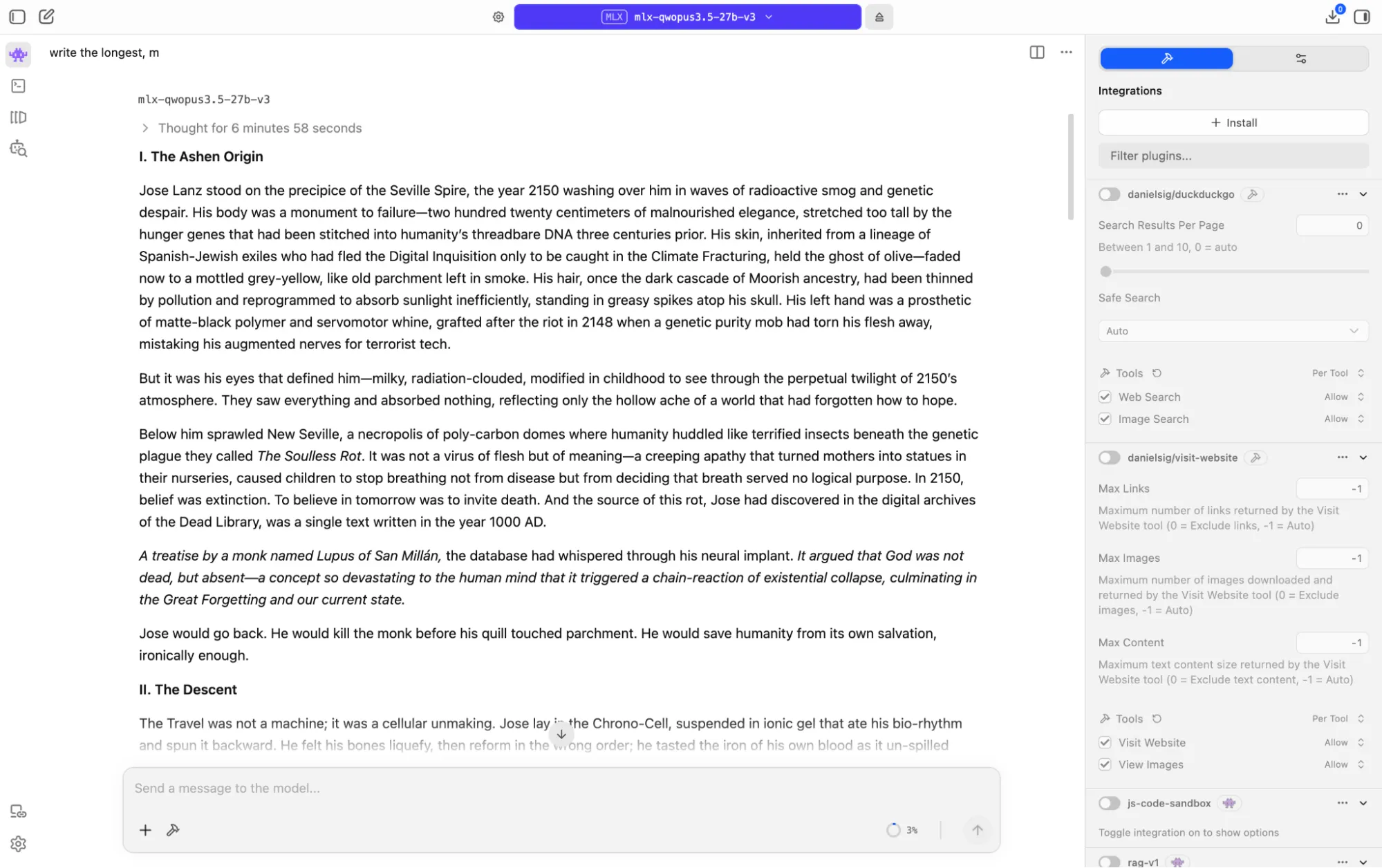

Independent testing of Qwopus v3 has highlighted its distinct "thinking" phase, which differentiates it from standard chat models. During a creative writing test involving a complex time-travel paradox set between the years 1000 and 2150, the model spent over six minutes in a reasoning state before generating text. This internal monologue allowed the model to reject several cliché plot points and settle on a "closed causal loop" narrative. The resulting 8,000-token story remained coherent and philosophically consistent, a feat rarely seen in models under 70 billion parameters.

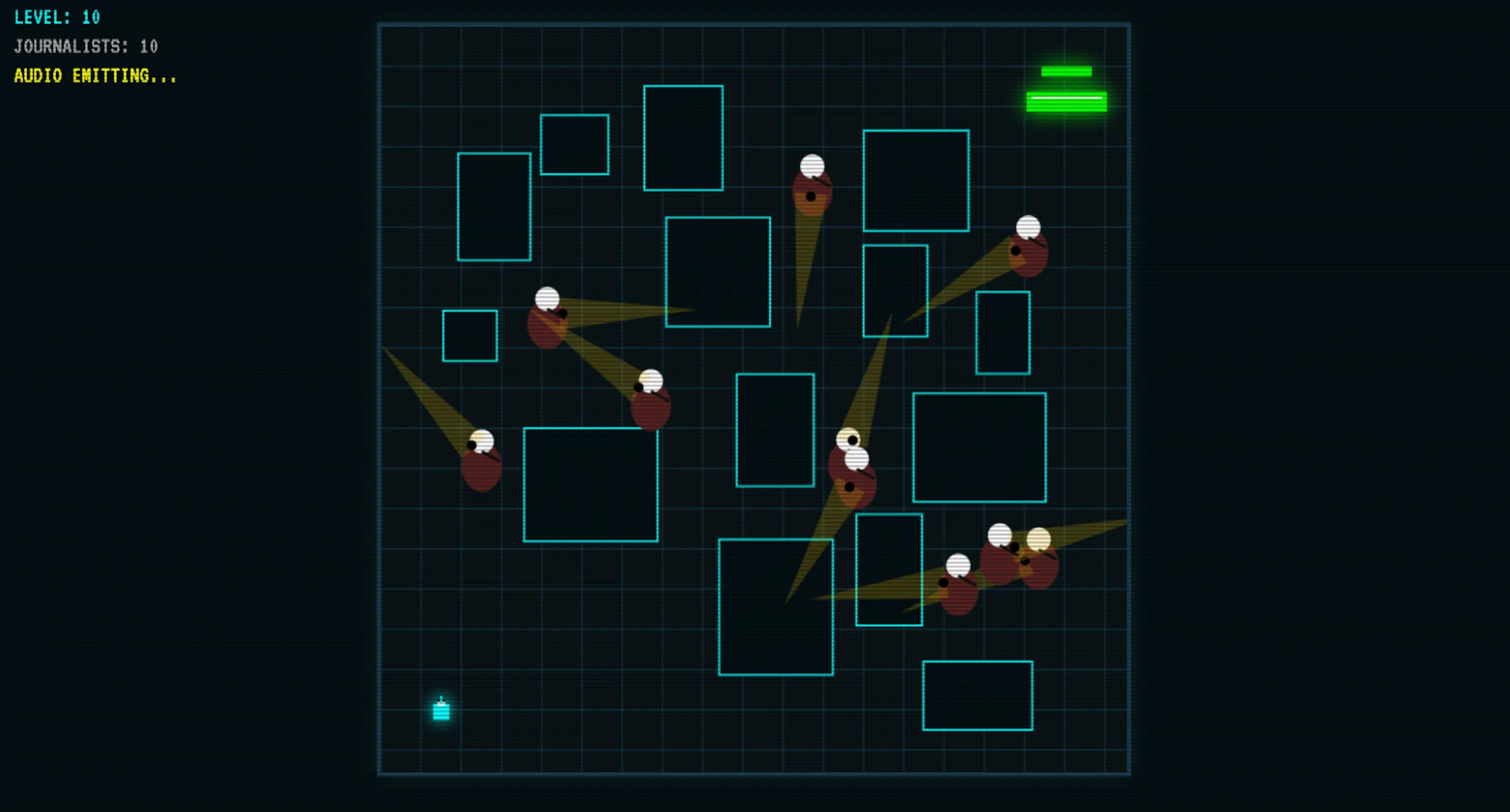

In coding applications, the model has proven capable of building functional software from scratch with minimal human intervention. In a test case involving the creation of a 2D game, Qwopus v3 produced working code with integrated sound, visual logic, and collision detection after only one follow-up exchange. Performance metrics indicate that Qwopus v3 outperforms Google’s Gemma 4 (a 41-billion parameter model) in key logical areas, suggesting that the quality of distilled reasoning can often outweigh raw parameter count.

Safety, Ethics, and Sensitive Topic Handling

A significant concern with local, open-source models is the handling of sensitive or dangerous topics, as these models lack the real-time moderation layers found in commercial APIs. Qwopus maintains the foundational safety guardrails of the Qwen base model, which generally prevent the generation of illicit or derogatory content.

However, testing shows that the model’s distilled reasoning allows it to handle "gray area" prompts with a level of nuance usually reserved for high-end proprietary systems. When presented with a complex scenario involving addiction and employment risk, the model did not issue a flat refusal. Instead, it reasoned through the ethical and legal implications, ultimately declining to assist in a deception while providing a comprehensive list of medical, legal, and crisis resources. This empathetic, reasoning-based approach to safety is a notable departure from the rigid "I cannot fulfill this request" responses common in less sophisticated models.

Broader Impact and Industry Implications

The success of the Qwopus project, which has already surpassed one million downloads across its various versions, signals a shift in the AI power dynamic. By proving that high-level reasoning can be distilled into smaller, local architectures, independent developers are challenging the "moat" built by large AI corporations.

For developers and organizations, the implications are threefold:

- Data Privacy: Sensitive codebases and internal documents no longer need to be sent to third-party servers for analysis. All reasoning and processing can happen on air-gapped or local hardware.

- Cost Efficiency: For agentic workflows—where a model may need to "think" and call tools hundreds of times to complete a task—the elimination of per-token API costs represents a massive reduction in operational overhead.

- Resilience: Local models provide a fallback for users in regions with high latency or for those who require AI assistance in environments without reliable internet access.
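
The cost argument comes down to simple multiplication. The sketch below estimates the metered cost of one agentic task; the step count, tokens per step, and price are placeholder values, not real prices or measurements, and the `api_cost_usd` helper is hypothetical.

```python
def api_cost_usd(steps: int, tokens_per_step: int, usd_per_million_tokens: float) -> float:
    """Metered cost of one agent task billed per token."""
    return steps * tokens_per_step * usd_per_million_tokens / 1_000_000


# All three inputs are placeholder values, not real prices or measurements.
cost = api_cost_usd(steps=300, tokens_per_step=4_000, usd_per_million_tokens=10.0)
print(f"${cost:.2f} per task")  # $12.00 per task
```

A local model replaces this per-token charge with hardware and electricity costs, which do not grow with every additional tool call in the same way.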

While Qwopus v3 is not presented as a full replacement for the frontier performance of a system like Claude 4.6 Opus in every domain, it offers a "good enough" alternative for the vast majority of professional and creative tasks. The project’s transparency, including the release of training notebooks and guides, ensures that the methodology can be replicated and improved upon by the global AI community.

As hardware continues to advance and distillation techniques become more refined, the gap between the "frontier" and the "local" is expected to narrow further. Qwopus serves as a functional blueprint for this transition, demonstrating that in the future of artificial intelligence, how a model thinks may be far more important than how many billions of parameters it contains.