The concept of trust, traditionally the bedrock of human civilization and economic exchange, is undergoing a fundamental transformation as generative artificial intelligence (AI) permeates the global digital landscape. Dr. Pooyan Ghamari, a Swiss economist and visionary, posits that we have entered an era defined by "synthetic trust"—a phenomenon where AI-generated content and interactions simulate human-like authenticity to either strengthen or destabilize social and market structures. As these technologies evolve from experimental tools to enterprise-grade infrastructure, the dual capacity of generative AI to act as both a bridge and a wedge has become a focal point for policymakers, economists, and technologists worldwide.

The Evolution of Generative AI: A Chronological Context

To understand the current state of synthetic trust, one must examine the rapid acceleration of generative technologies over the last decade. While the foundations of neural networks were laid decades ago, the current trajectory began in earnest in 2017 with the publication of the seminal paper "Attention Is All You Need" by Google researchers, which introduced the transformer architecture. This innovation allowed models to process data in parallel and understand context with unprecedented accuracy.

By 2020, the release of GPT-3 demonstrated that large language models (LLMs) could produce coherent, human-like text, sparking the first serious discussions about the automation of cognitive labor. The timeline reached a fever pitch in 2022, when the public release of DALL-E 2 and then ChatGPT brought generative capabilities to the masses. According to a 2023 estimate from McKinsey & Company, generative AI could add $2.6 trillion to $4.4 trillion annually to the global economy across the use cases the firm analyzed. This rapid adoption has created a vacuum in which traditional methods of verifying truth are struggling to keep pace with the speed of synthetic content generation.

The Alchemy of Authenticity: Building Synthetic Bonds

Generative AI’s ability to foster trust lies in its capacity for hyper-personalization and emotional mimicry. In the current market, virtual companions and AI-driven customer service interfaces are no longer merely reactive; they are predictive and empathetic. These systems utilize vast datasets to tailor advice and responses to individual psychological profiles, creating a sense of being "understood" that was previously exclusive to human interaction.

In the educational sector, the impact of synthetic trust is particularly profound. Simulated environments allow medical students, engineers, and pilots to practice high-stakes maneuvers in risk-free settings. By providing consistent, non-judgmental feedback, these AI tutors build a unique form of learner confidence. Research from the World Economic Forum suggests that AI-enhanced personalized learning could improve student outcomes by up to 30%, largely due to the rapport established between the student and the digital interface. This "alchemy of authenticity" transforms cold code into a dependable partner, facilitating deeper engagement in digital spaces.

Shadows in the Synthetic Mirror: The Erosion of Veracity

Despite its benefits, the same technology that builds trust can be weaponized to dismantle it. The proliferation of deepfakes—highly realistic synthetic media—has introduced a "liar’s dividend," where the mere existence of fake content allows individuals to dismiss real evidence as fabricated. In 2023 alone, the frequency of deepfake content online increased by an estimated 900%, according to cybersecurity firm Sensity.

The implications for political stability are severe. In various international elections, AI-generated audio and video have been used to misrepresent candidates, creating confusion among the electorate. Economically, the risk is equally potent. A single AI-generated image of a non-existent explosion near the Pentagon briefly caused a dip in the S&P 500 in May 2023, illustrating how quickly synthetic misinformation can trigger real-world financial volatility. For businesses, the erosion of consumer loyalty is a constant threat; if a brand’s digital presence can be perfectly spoofed, the cost of verifying legitimate communications rises sharply.

Economic Ripples and the Reevaluation of Market Confidence

From the perspective of Dr. Pooyan Ghamari and other leading economists, generative AI necessitates a complete overhaul of traditional trust mechanisms. Markets thrive on information symmetry, but generative AI introduces a layer of "noise" that complicates decision-making. We are witnessing the emergence of a "Trust Tax"—the additional cost that businesses and consumers must pay to verify that an interaction is genuine.

Conversely, companies that successfully navigate this landscape stand to gain a "Trust Premium." By adopting radical transparency and verifiable AI practices, businesses can differentiate themselves in a crowded, synthetic market. Data from the 2024 Edelman Trust Barometer indicates that 63% of consumers are more likely to support brands that are transparent about their use of AI. This shift suggests that trust is becoming a tangible economic asset, one that requires proactive management and investment.

Official Responses and Regulatory Frameworks

Governments and international bodies have begun to react to the challenges of synthetic trust with unprecedented speed. The European Union’s AI Act, the world’s first comprehensive horizontal legal framework for AI, categorizes AI systems by risk level and mandates strict transparency for generative models. Under these rules, synthetic content must be clearly labeled, ensuring that citizens know when they are interacting with a machine.

In the United States, the Biden-Harris administration issued an Executive Order in October 2023 on the "Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence." This order emphasizes the development of standards for "watermarking" AI-generated content to prevent fraud and disinformation. Similarly, the United Nations has established a High-level Advisory Body on Artificial Intelligence to foster global governance, recognizing that synthetic trust is a cross-border issue that cannot be managed by any single nation in isolation.

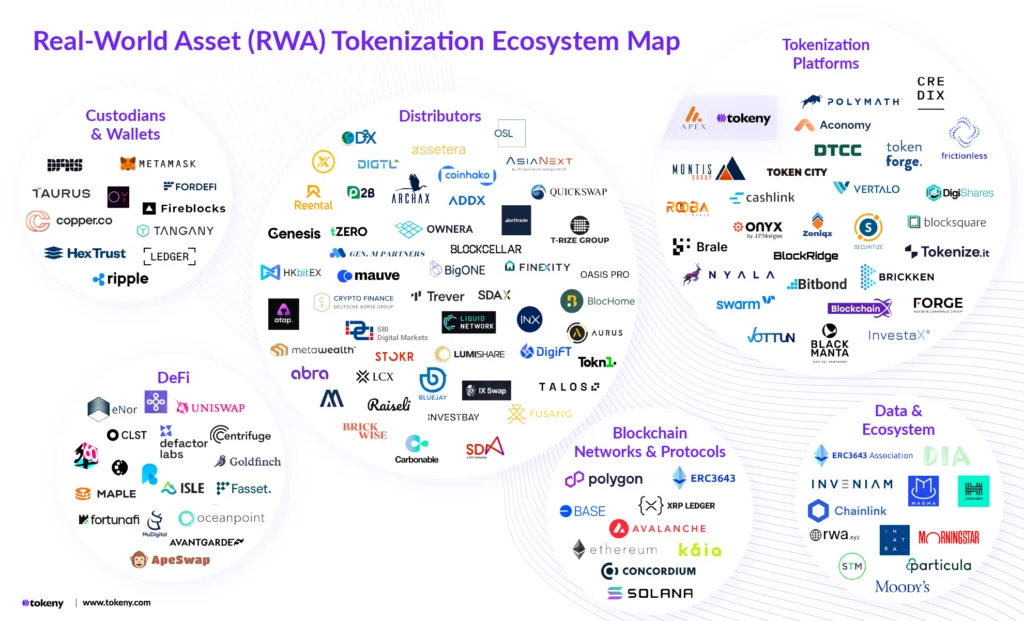

Technological Safeguards: The Role of Blockchain and Provenance

To mitigate the risks of deception, technologists are looking toward verifiable technologies to anchor synthetic content in reality. Dr. Ghamari highlights the synergy between generative AI and blockchain as a potential solution. By utilizing decentralized ledgers, the origin and history of a digital asset—its "provenance"—can be permanently recorded.

When an AI model generates an image or a report, a cryptographic hash of that output can be stored on a blockchain, serving as a digital birth certificate. Recipients can recompute the hash to verify that the content has not been tampered with and, if the record is signed by its publisher, that it originated from a trusted source. The Content Authenticity Initiative (CAI), a coalition of hundreds of tech and media companies, is promoting open standards for content provenance, based on cryptographically signed metadata attached to the content itself, to ensure that the digital world remains a place where truth can be discerned from fiction.
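The hash-registration idea can be illustrated with a minimal Python sketch. It is not a real blockchain and not the CAI's format: `ProvenanceLedger`, `register`, `verify_content`, and `chain_is_intact` are hypothetical names chosen for illustration, and the "ledger" is a single in-memory list whose entries are chained by hash. A production system would add distributed consensus, and would need a digital signature to back the claimed source.

```python
import hashlib
import json
from dataclasses import dataclass, field


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of data as a hex string."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class ProvenanceLedger:
    """Toy append-only ledger (hypothetical); each entry links to the previous one by hash."""
    entries: list = field(default_factory=list)

    def register(self, content: bytes, source: str) -> dict:
        # The first entry links to an all-zero placeholder hash.
        prev_hash = self.entries[-1]["entry_hash"] if self.entries else "0" * 64
        record = {
            "content_hash": sha256_hex(content),
            "source": source,  # unauthenticated label; a real system would sign this
            "prev_hash": prev_hash,
        }
        # Hash the record itself so that later edits to the ledger are detectable.
        record["entry_hash"] = sha256_hex(json.dumps(record, sort_keys=True).encode())
        self.entries.append(record)
        return record

    def verify_content(self, content: bytes):
        """Return the registered source if this exact content is on the ledger, else None."""
        digest = sha256_hex(content)
        for entry in self.entries:
            if entry["content_hash"] == digest:
                return entry["source"]
        return None

    def chain_is_intact(self) -> bool:
        """Recompute every entry hash and check each link to the previous entry."""
        prev = "0" * 64
        for entry in self.entries:
            body = {k: entry[k] for k in ("content_hash", "source", "prev_hash")}
            expected = sha256_hex(json.dumps(body, sort_keys=True).encode())
            if entry["prev_hash"] != prev or entry["entry_hash"] != expected:
                return False
            prev = entry["entry_hash"]
        return True


ledger = ProvenanceLedger()
report = b"Quarterly summary produced by a generative model"
ledger.register(report, source="example-newsroom")

print(ledger.verify_content(report))         # the registered source label
print(ledger.verify_content(report + b"!"))  # None, because altered content has no record
print(ledger.chain_is_intact())              # True while no entry has been edited
```

Changing even one byte of the content produces a different digest, so the lookup fails. Editing a stored entry breaks the hash chain, which is the property that decentralized ledgers extend across many independent parties.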

Brief Analysis of Implications: A Future Defined by Integrity

The long-term implications of synthetic trust extend beyond technology and economics into the very fabric of human psychology. If we become accustomed to the "perfection" of AI companions and the efficiency of synthetic interfaces, there is a risk that genuine human bonds—which are often messy, unpredictable, and inefficient—may be undervalued.

However, the visionary path forward is not one of rejection, but of integration. The goal is to create a "Trust-Empowered Future" where generative AI enhances human capabilities without compromising the integrity of our interactions. This requires a multi-faceted approach:

- Technical Literacy: Educating the public to critically evaluate digital content.

- Algorithmic Accountability: Holding developers responsible for the outputs of their models.

- Ethical Design: Prioritizing human-centric values in the development phase of AI.

Conclusion: Balancing Innovation with Integrity

As we stand on the brink of this new era, the challenge for society is to harness the immense potential of generative AI while safeguarding the foundations of trust. The economic benefits of AI-driven efficiency and personalization are too significant to ignore, yet the costs of a "post-truth" world are too high to bear.

By implementing robust ethical frameworks, leveraging verifiable technologies like blockchain, and fostering global cooperation, we can ensure that synthetic trust serves as a catalyst for progress. In the words of Dr. Ghamari, the objective is to cultivate a world where technology strengthens rather than supplants the genuine human bonds that have always been the true engine of society. The future of the global economy will depend not just on the intelligence of our machines, but on the integrity of the systems we build to govern them.